AI-Powered Protein Design: Revolutionizing Therapeutic Development with Machine Learning


Abstract

This article provides a comprehensive overview of how artificial intelligence and machine learning are transforming the field of therapeutic protein design. Tailored for researchers, scientists, and drug development professionals, we explore the foundational concepts, including deep learning architectures and the shift from structure-based to sequence-based design. We detail key methodologies like RFdiffusion and ProteinMPNN, their applications in creating novel enzymes, antibodies, and vaccines, and address common challenges in model training, data scarcity, and protein stability. Finally, we examine rigorous validation techniques and compare leading AI platforms, culminating in a synthesis of current achievements and future clinical implications, offering a roadmap for integrating AI into next-generation biotherapeutics pipelines.

From Sequences to Structures: Core AI Concepts Transforming Protein Design

Within therapeutic research, the central thesis is that machine learning (ML) and artificial intelligence (AI) are not merely incremental improvements but represent a foundational paradigm shift from reductionist, manual design to holistic, predictive generation of functional proteins. Traditional rational design operates on limited human-defined rules, while AI leverages high-dimensional pattern recognition across entire protein sequence space to discover novel solutions beyond human intuition.

Comparative Analysis: Core Methodologies & Data

Table 1: Paradigm Comparison: Traditional Rational vs. AI-Driven Design

| Aspect | Traditional Rational Design | AI-Driven Design |

|---|---|---|

| Philosophical Basis | Reductionist; structure-determines-function. | Holistic; statistical pattern recognition in sequence-structure-function landscape. |

| Starting Point | Known 3D structure of a natural template (e.g., wild-type protein). | Can start from scratch (de novo), a motif, or a disordered sequence. |

| Key Drivers | Site-directed mutagenesis based on evolutionary alignment, mechanistic hypotheses, & biophysical principles (e.g., ΔΔG calculations). | Generative models (e.g., ProteinMPNN, RFdiffusion), protein language models (e.g., ESM-2), and structure predictors (AlphaFold2, RoseTTAFold). |

| Throughput & Scale | Low-to-medium; iterative cycles of design, build, test for handfuls of variants. | High; can generate, in silico screen, and rank thousands to millions of designs in one cycle. |

| Primary Success Metric (Therapeutics) | Improved binding affinity (KD), stability (Tm), or activity (kcat/KM) of a known scaffold. | Discovery of novel folds, functional sites, and binders with no natural precedent. |

| Quantitative Success Rate | ~5-15% of designed variants show desired improvement. | ~20-50% of AI-generated proteins express and fold correctly, with ~1-10% showing high target function in first-round experimental validation. |

| Major Limitation | Heavily constrained by prior knowledge; poor at exploring novel conformations. | Training data dependency; potential for "hallucinations" that are physically unrealistic. |

Table 2: Quantitative Benchmark: Design of Novel Protein Binders

| Design Target | Traditional Method (e.g., Rosetta) | AI Method (e.g., RFdiffusion/ProteinMPNN) | Reported Outcome |

|---|---|---|---|

| SARS-CoV-2 Spike RBD | Months of design cycles; low yield of high-affinity miniproteins. | Weeks of in silico generation; high yield. | AI: Multiple designs with KD < 100 nM, some < 10 nM. (Nature, 2023) |

| Cancer Antigen (e.g., HER2) | Focus on humanization and affinity maturation of existing antibodies (mAbs). | De novo design of small binding proteins to epitopes inaccessible to mAbs. | AI: Novel binders with sub-nanomolar affinity and enhanced tissue penetration. |

| G Protein-Coupled Receptor (GPCR) | Extremely challenging due to dynamic structure; limited success. | Diffusion models conditioned on inactive/active states. | AI: First de novo designed agonists and positive allosteric modulators for specific GPCRs. (Science, 2024) |

Detailed Application Notes & Protocols

Protocol 1: Traditional Rational Design for Thermostabilization

Aim: Increase the melting temperature (Tm) of an enzyme by 10°C. Workflow:

- Template Analysis: Obtain crystal structure of wild-type (WT) enzyme. Perform molecular dynamics (MD) simulation to identify flexible regions.

- Evolutionary Analysis: Run multiple sequence alignment (MSA) of homologous proteins. Identify conserved residues and positions with correlated mutations.

- Hypothesis Generation: Select 5-10 target positions for mutagenesis based on: (a) Replacing flexible, non-conserved residues with Proline (rigidifies), (b) Introducing salt bridges or disulfide bonds in flexible loops.

- Energy Calculation: Use computational tools like Rosetta or FoldX to calculate predicted ΔΔG of folding for each single-point mutant.

- Library Construction: Use site-directed mutagenesis (e.g., KLD method) to create the 5-10 single mutants.

- Expression & Purification: Express each variant in E. coli and purify via His-tag affinity chromatography.

- Validation: Measure Tm via differential scanning fluorimetry (DSF). Test activity via spectrophotometric assay.

Protocol 2: AI-Driven De Novo Binder Design

Aim: Generate a novel small protein that binds to a therapeutically relevant target with high affinity and specificity. Workflow:

- Target Specification: Define the target protein's structure (experimental or AlphaFold2 prediction) and specify the binding epitope (a set of residues).

- Conditional Generation: Use a diffusion model (e.g., RFdiffusion) conditioned on the target epitope coordinates to generate 1,000-10,000 backbone scaffolds that geometrically complement the surface.

- Sequence Design: For each generated backbone, use an inverse folding model (e.g., ProteinMPNN) to design an amino acid sequence that stabilizes the fold and the interface. Filter designs by in silico confidence scores (pLDDT, pAE).

- In Silico Screening: Re-predict the complex of each of the top 500 designs with the target using a complex structure predictor (e.g., AlphaFold-Multimer) and rank by predicted interface confidence (e.g., ipTM or iScore). Select the top 50-100 designs for experimental testing.

- DNA Synthesis & High-Throughput Build: Encode selected designs into genes and synthesize via pooled oligo library synthesis.

- HT Expression & Screening: Use a yeast surface display or mammalian cell display system to screen the library for target binding. Isolate hits via fluorescence-activated cell sorting (FACS).

- Characterization: Express and purify hits. Characterize via Surface Plasmon Resonance (SPR) for kinetics (KD, kon, koff) and DSF for stability.
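The filtering and ranking steps in the workflow above can be sketched in a few lines. This is a minimal illustration, not the pipeline used in any cited study: the `Design` record, the threshold values, and the choice to rank by interface pAE are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Design:
    name: str
    plddt: float          # mean pLDDT of the designed binder (0-100)
    interface_pae: float  # mean predicted aligned error across the interface, in Å


def select_designs(designs, min_plddt=80.0, max_interface_pae=10.0, top_n=50):
    """Keep designs that pass both thresholds, then rank them.

    Thresholds are illustrative assumptions. Ranking is by interface pAE
    (lower is better), with higher pLDDT breaking ties.
    """
    passing = [
        d for d in designs
        if d.plddt >= min_plddt and d.interface_pae <= max_interface_pae
    ]
    passing.sort(key=lambda d: (d.interface_pae, -d.plddt))
    return passing[:top_n]


# Hypothetical scores for four designs.
designs = [
    Design("d1", 91.2, 6.5),
    Design("d2", 74.0, 5.1),   # fails the pLDDT threshold
    Design("d3", 88.0, 12.3),  # fails the interface pAE threshold
    Design("d4", 85.5, 4.8),
]
print([d.name for d in select_designs(designs, top_n=2)])  # ['d4', 'd1']
```

In practice the cutoffs would be tuned per target, since confidence scores are only a proxy for experimental binding.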

Visualizations

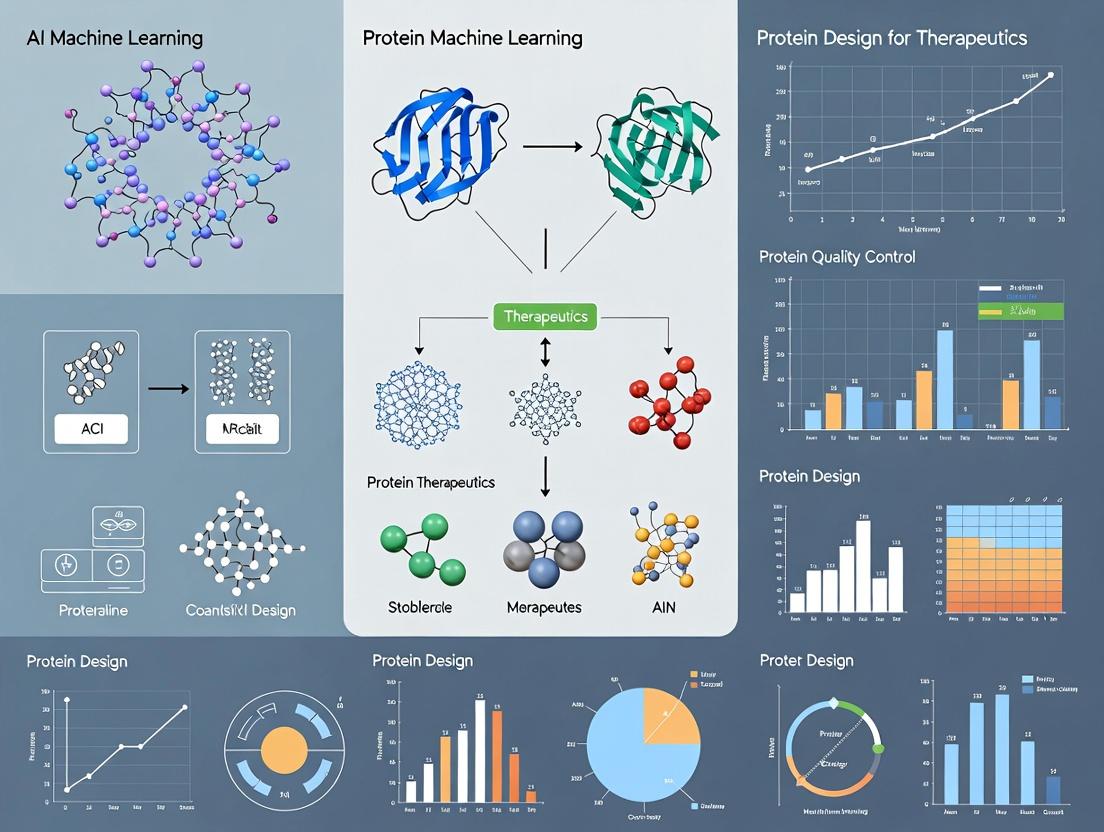

Title: Workflow Comparison: Traditional vs. AI Protein Design

Title: AI De Novo Binder Design Protocol Flow

The Scientist's Toolkit: Key Research Reagent Solutions

| Reagent / Material | Function in Protein Design | Example/Supplier |

|---|---|---|

| Rosetta Software Suite | Computational modeling for energy calculation, docking, and traditional design. | University of Washington RosettaCommons. |

| AlphaFold2 / ColabFold | Accurate protein structure prediction from sequence; essential for target and design analysis. | DeepMind; Public Colab notebooks. |

| RFdiffusion & ProteinMPNN | AI models for de novo backbone generation and sequence design, respectively. | Publicly available on GitHub (Baker Lab). |

| NEB Gibson Assembly Master Mix | Seamless cloning of designed gene variants into expression vectors. | New England Biolabs. |

| Cytiva HisTrap HP Columns | Standardized affinity purification of His-tagged recombinant protein variants. | Cytiva. |

| Promega Nano-Glo HiBiT Blotting System | Rapid, high-sensitivity quantitation of protein stability and solubility in lysates. | Promega. |

| Cytiva Biacore 8K Series | Gold-standard SPR system for label-free kinetics (KD) analysis of protein-protein interactions. | Cytiva. |

| Unchained Labs Uncle | High-throughput thermal stability (Tm) and aggregation measurement. | Unchained Labs. |

| Twist Bioscience Gene Synthesis | Reliable synthesis of designed gene sequences, including large variant libraries. | Twist Bioscience. |

| ClonePlus Yeast Display Kit | Display and screening platform for isolating high-affinity binders from designed libraries. | ProteoGen. |

Application Notes for Protein Design

1. Convolutional Neural Networks (CNNs)

Primary Therapeutic Application: Local structural motif and binding pocket prediction from protein 2D contact maps or 3D voxelized grids. CNNs excel at identifying spatial hierarchies and local patterns critical for understanding secondary structure elements (alpha-helices, beta-sheets) and catalytic sites.

2. Transformers (Attention-Based Models)

Primary Therapeutic Application: Sequence-to-property prediction and de novo protein sequence generation. By processing entire amino acid sequences with self-attention, Transformers model long-range dependencies crucial for understanding non-local interactions that determine protein folding and function.

3. Diffusion Models

Primary Therapeutic Application: Generative design of novel protein backbones and 3D structures. These probabilistic models iteratively refine noise into valid structures, enabling the sampling of diverse, thermodynamically stable protein folds conditioned on desired functional specifications.

Table 1: Performance Benchmarks of Architectures on Key Protein Design Tasks

| Architecture | Task (Dataset) | Key Metric | Reported Performance | Year |

|---|---|---|---|---|

| CNN (3D ResNet) | Protein-Ligand Affinity Prediction (PDBBind) | Pearson's R | 0.82 | 2023 |

| Transformer (ProteinBERT) | Protein Function Prediction (Gene Ontology) | F1 Max | 0.65 | 2022 |

| Diffusion Model (RFdiffusion) | De Novo Protein Scaffold Design | Design Success Rate | ~20% (high accuracy) | 2023 |

| Geometric CNN | Protein-Protein Interface Prediction (DockGround) | AUC-ROC | 0.91 | 2024 |

| Transformer (ESM-2) | Variant Effect Prediction | Spearman's ρ | 0.73 | 2023 |

| Diffusion (Chroma) | Protein Complex Generation | TM-score (>0.5) | 41% | 2023 |

Table 2: Computational Resource Requirements for Training

| Architecture | Typical Model Size (Params) | Minimum GPU VRAM | Approx. Training Time (Dataset Size) |

|---|---|---|---|

| 2D/3D CNN | 10M - 100M | 8 GB | 2-5 days (~100k samples) |

| Standard Transformer | 100M - 10B | 40 GB+ | 1-4 weeks (~1M sequences) |

| Diffusion Model (Protein) | 50M - 500M | 24 GB+ | 1-3 weeks (~100k structures) |

Experimental Protocols

Protocol 1: Training a CNN for Binding Pocket Detection

Objective: Train a 3D CNN to identify and segment ligand-binding pockets from protein structure voxel grids.

- Data Preparation: Obtain protein structures from the PDB. Pre-process into 1Å-resolution 3D grids (e.g., 64x64x64 voxels). Channels represent atom type densities (C, N, O, S, etc.) and electrostatic potential.

- Label Generation: Use a tool like fpocket to generate ground-truth binary masks for binding pockets.

- Model Architecture: Implement a 3D U-Net with residual blocks. Use 3D convolutions, batch normalization, and ReLU activations.

- Training: Loss: Dice Loss. Optimizer: Adam (lr=1e-4). Batch size: 8 (subject to VRAM). Train for 200 epochs with early stopping.

- Validation: Evaluate on a held-out set using the Dice coefficient and precision-recall on voxel-wise segmentation.
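The Dice loss used for training and the Dice coefficient used for validation are two views of the same quantity. The sketch below computes both on flattened voxel labels in plain Python; a real training loop would use tensor operations in a deep learning framework instead. The function names and the smoothing constant `eps` are choices made for this example.

```python
def dice_coefficient(pred, target, eps=1e-7):
    """Dice = 2 * |P ∩ T| / (|P| + |T|) over flattened voxel values.

    `pred` may hold hard 0/1 labels or soft probabilities in [0, 1];
    `target` holds 0/1 ground-truth labels. `eps` avoids division by
    zero when both masks are empty (the result is then 1.0).
    """
    if len(pred) != len(target):
        raise ValueError("pred and target must have the same number of voxels")
    intersection = sum(p * t for p, t in zip(pred, target))
    total = sum(pred) + sum(target)
    return (2.0 * intersection + eps) / (total + eps)


def dice_loss(pred, target):
    """Loss to minimize: 1 - Dice, so perfect overlap gives a loss near 0."""
    return 1.0 - dice_coefficient(pred, target)


# Two predicted pocket voxels, one of which overlaps the single true voxel.
pred = [1, 1, 0, 0]
target = [1, 0, 0, 0]
print(round(dice_coefficient(pred, target), 3))  # 0.667
```

Dice is usually preferred over plain voxel accuracy here because pocket voxels are a small fraction of the grid.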

Protocol 2: Fine-Tuning a Transformer for Stability Prediction

Objective: Adapt a pre-trained protein language model (e.g., ESM-2) to predict the change in folding stability (ΔΔG) of protein variants.

- Base Model: Load the `esm2_t30_150M_UR50D` model and its tokenizer.

- Dataset: Use the S669 or a proprietary variant stability dataset. Format: `[wildtype_sequence], [mutation], [experimental_ddG]`.

- Input Representation: For a variant "M1A", construct input as `<seq>: M1A` or use a specialized token. The wildtype sequence is tokenized.

- Head Addition: Replace the LM head with a regression head (pooled output -> linear layer -> single output).

- Training: Freeze most transformer layers, only fine-tune the last 2 layers and the head. Loss: Mean Squared Error. Optimizer: AdamW (lr=5e-5). Train for 10-20 epochs.
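Before any model is involved, each dataset record has to be parsed and the mutation checked against the wildtype sequence. The sketch below shows one way to do this, assuming the comma-separated record format and the "M1A" mutation notation (wildtype residue, 1-based position, mutant residue) described above. The function names and the example sequence are hypothetical.

```python
import re

# Wildtype residue, 1-based position, mutant residue, e.g. "M1A".
MUTATION_RE = re.compile(r"^([A-Z])(\d+)([A-Z])$")


def apply_mutation(wildtype, mutation):
    """Return the mutant sequence, checking that the wildtype residue matches."""
    match = MUTATION_RE.match(mutation)
    if match is None:
        raise ValueError(f"unrecognized mutation string: {mutation!r}")
    wt_res, pos, mut_res = match.group(1), int(match.group(2)), match.group(3)
    if not 1 <= pos <= len(wildtype):
        raise ValueError(f"position {pos} is outside the sequence")
    if wildtype[pos - 1] != wt_res:
        raise ValueError(
            f"expected {wt_res} at position {pos}, found {wildtype[pos - 1]}"
        )
    return wildtype[:pos - 1] + mut_res + wildtype[pos:]


def parse_record(line):
    """Parse 'sequence, mutation, ddG' into (wildtype, mutation, ddG, mutant)."""
    sequence, mutation, ddg = [field.strip() for field in line.split(",")]
    return sequence, mutation, float(ddg), apply_mutation(sequence, mutation)


# Hypothetical record: a short made-up sequence with a made-up ddG value.
print(parse_record("MKTAYIAK, M1A, -0.8"))
# ('MKTAYIAK', 'M1A', -0.8, 'AKTAYIAK')
```

Validating the wildtype residue at load time catches off-by-one numbering errors, which are a common source of silent label noise in stability datasets.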

Protocol 3: Generating Novel Protein Folds with a Diffusion Model

Objective: Use a conditional diffusion model (e.g., RFdiffusion) to generate a backbone structure for a specified function.

- Environment Setup: Install the RFdiffusion software (e.g., from GitHub repository) and its dependencies, including PyRosetta or AlphaFold2 for structure refinement.

- Conditioning: Define the functional motif. This could be a specified protein-protein interface (via a motif pdb), a catalytic triad geometry, or a set of secondary structure constraints.

- Inference: Run the diffusion model with the appropriate conditioning flags (e.g., `--contigs="A1-100"`, `--binders="B1-50"` for a binder; the exact option syntax depends on the installed RFdiffusion version). The model performs iterative denoising from a random cloud of Cα atoms.

- Post-processing & Filtering: Design a sequence for each generated backbone (e.g., with ProteinMPNN), then predict the all-atom structure, including side chains, with AlphaFold2. Filter outputs using predicted local-distance difference test (pLDDT) and/or predicted template modeling (pTM) scores (e.g., pLDDT > 70 on the 0-100 scale or pTM > 0.7).

- Validation: Run in silico docking if applicable, and molecular dynamics (MD) simulations (≥100 ns) to assess stability.

Visualizations

CNN Protein Analysis Workflow

Transformer Self-Attention for Proteins

Diffusion Model Denoising Process

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for ML-Based Protein Design

| Tool/Solution | Primary Function | Relevance to Architecture |

|---|---|---|

| AlphaFold2 (ColabFold) | Protein structure prediction from sequence. | Provides ground truth & validation for CNN/Diffusion models; fine-tuning base. |

| PyTorch / JAX | Deep learning frameworks. | Essential for implementing and training all CNN, Transformer, and Diffusion models. |

| ESM (Evolutionary Scale Modeling) | Pre-trained protein language models. | Transformer-based foundational models for transfer learning on therapeutic tasks. |

| RFdiffusion / Chroma | Diffusion models for protein generation. | Specialized software for de novo protein backbone and complex design. |

| Rosetta / PyRosetta | Molecular modeling suite. | Used for physics-based refinement, scoring, and design validation post-ML generation. |

| MD Simulation (GROMACS/AMBER) | Molecular Dynamics. | Critical for in silico validation of generated proteins' stability and dynamics. |

| PDB & UniProt | Public protein structure/sequence databases. | Primary sources of training data for all architectures. |

| Docker/Singularity | Containerization. | Ensures reproducibility of complex ML and molecular modeling pipelines. |

Within the paradigm of AI-driven therapeutic protein design, the selection and representation of structural data are foundational. This document provides application notes and protocols for utilizing the Protein Data Bank (PDB) and AlphaFold Database (AFDB) as primary data sources, focusing on their integration into machine learning pipelines for structure-based design and functional prediction.

Table 1: Core Characteristics of PDB and AlphaFold DB (as of 2024)

| Feature | Protein Data Bank (PDB) | AlphaFold DB (EMBL-EBI) |

|---|---|---|

| Primary Content | Experimentally determined 3D structures (X-ray, Cryo-EM, NMR). | Computationally predicted protein structures (AI/DeepMind). |

| Size (Entries) | ~220,000 (with redundancy). | >200 million (proteome-scale predictions). |

| Resolution (Typical) | Atomic (e.g., 1.0Å - 3.5Å for X-ray). | Predicted Local Distance Difference Test (pLDDT) score (0-100). |

| Metadata | Rich experimental details, ligands, crystallization conditions. | Prediction metadata (pLDDT, per-residue confidence, predicted aligned error). |

| Key File Format | PDB, mmCIF. | PDB, mmCIF (with custom fields for confidence metrics). |

| Therapeutic Relevance | Gold standard for binding sites, drug-protein complexes, mechanistic studies. | Enables work on proteins with no experimental structure (e.g., novel targets, orphan receptors). |

| Update Frequency | Weekly. | Major releases quarterly, with periodic updates. |

| Access | REST API, FTP, RCSB PDB website. | REST API, Google Cloud Public Dataset, AFDB website. |

Table 2: Key Confidence Metrics in AlphaFold DB Outputs

| Metric | Range | Interpretation for Therapeutic Design |

|---|---|---|

| pLDDT | 0 - 100 | Per-residue confidence. >90: Very high (backbone and most side chains reliable). 70-90: Confident (backbone reliable; side chains may vary). 50-70: Low (use with caution). <50: Very low (often disordered; do not interpret). |

| PAE (Predicted Aligned Error) | 0 - 30+ Å | Expected positional error between residues. Low inter-domain PAE suggests reliable relative orientation. |

| Model Confidence (Global) | High/Medium/Low | Overall model quality based on pLDDT distribution. |
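The pLDDT ranges in the table can be turned into a small helper for summarizing a model. This sketch uses the four band names shown on AlphaFold DB entry pages (very high, confident, low, very low); how scores exactly on a boundary (90, 70, 50) are assigned is an assumption made here, as are the function names.

```python
BANDS = ("very high", "confident", "low", "very low")


def plddt_band(score):
    """Map a per-residue pLDDT score (0-100) to a confidence band."""
    if not 0.0 <= score <= 100.0:
        raise ValueError("pLDDT must lie between 0 and 100")
    if score > 90:
        return "very high"
    if score >= 70:
        return "confident"
    if score >= 50:
        return "low"
    return "very low"


def band_fractions(scores):
    """Fraction of residues falling in each band, as a quick global summary."""
    counts = {band: 0 for band in BANDS}
    for score in scores:
        counts[plddt_band(score)] += 1
    n = len(scores)
    return {band: (count / n if n else 0.0) for band, count in counts.items()}


# Hypothetical per-residue scores for a four-residue fragment.
print(band_fractions([95, 80, 60, 40]))
```

A model with a large "very low" fraction is a candidate for trimming to its confident domains before docking.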

Experimental Protocols

Protocol 3.1: Curating a High-Quality Structural Dataset for Training a Binding Site Predictor

Objective: Assemble a non-redundant set of protein-ligand complexes from the PDB for training a graph neural network.

Materials:

- High-performance computing cluster or cloud instance.

- biopython, pandas, mdanalysis Python libraries.

- RCSB PDB REST API access.

- PDB FTP archive.

Procedure:

- Query Generation: Use the RCSB API to query all structures with:

- Resolution ≤ 2.5 Å.

- Contains a non-polymeric ligand (HETATM records).

- No NMR structures.

- Release date after 2010.

- Example query:

https://search.rcsb.org/rcsbsearch/v2/query?json={"query":{"type":"group","logical_operator":"and","nodes":[{"type":"terminal","service":"text","parameters":{"attribute":"rcsb_entry_info.resolution_combined","operator":"less_or_equal","value":2.5}},{"type":"terminal","service":"text","parameters":{"attribute":"rcsb_entry_info.deposition_date","operator":"greater_or_equal","value":"2010-01-01"}},{"type":"terminal","service":"text","parameters":{"attribute":"rcsb_struct_symmetry.symbol","operator":"equals","value":"C1"}},{"type":"terminal","service":"text","parameters":{"attribute":"entity_poly.rcsb_entity_polymer_type","operator":"equals","value":"Protein"}}]},"return_type":"entry"}
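Hand-writing the JSON in a URL is error-prone, so it can help to assemble the query programmatically and URL-encode it. The sketch below rebuilds the example query from small helper functions. The attribute names and operators are copied verbatim from the example above and have not been checked against the current RCSB schema; the helper names are hypothetical. Note that the example as written contains no terminals for the ligand or NMR criteria, so those would need additional nodes taken from the RCSB attribute documentation.

```python
import json
from urllib.parse import quote

SEARCH_URL = "https://search.rcsb.org/rcsbsearch/v2/query"


def terminal(attribute, operator, value):
    """One attribute filter ('terminal' node) in the shape used by the example."""
    return {
        "type": "terminal",
        "service": "text",
        "parameters": {"attribute": attribute, "operator": operator, "value": value},
    }


def build_query(max_resolution=2.5, min_date="2010-01-01"):
    """Combine the filters from the example query with a logical AND."""
    nodes = [
        terminal("rcsb_entry_info.resolution_combined", "less_or_equal", max_resolution),
        terminal("rcsb_entry_info.deposition_date", "greater_or_equal", min_date),
        terminal("rcsb_struct_symmetry.symbol", "equals", "C1"),
        terminal("entity_poly.rcsb_entity_polymer_type", "equals", "Protein"),
    ]
    return {
        "query": {"type": "group", "logical_operator": "and", "nodes": nodes},
        "return_type": "entry",
    }


def query_url(query):
    """Serialize the query compactly and percent-encode it for a GET request."""
    return SEARCH_URL + "?json=" + quote(json.dumps(query, separators=(",", ":")))


print(query_url(build_query()))
```

Building the query as a dictionary also makes it straightforward to tighten the resolution cutoff or date without editing an encoded string.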

Redundancy Reduction: Download the list of PDB IDs. Use MMseqs2 or CD-HIT at 40% sequence identity to cluster proteins. Select one representative structure per cluster, prioritizing higher resolution and newer deposition date.
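The representative-selection rule can be sketched as follows. The sketch assumes clustering has already been run (e.g., with MMseqs2 or CD-HIT) and its output loaded into dictionaries; the field names `cluster_id`, `pdb_id`, `resolution`, and `deposited`, and the PDB IDs in the example, are hypothetical.

```python
from datetime import date


def pick_representatives(entries):
    """Choose one entry per cluster.

    Preference order: lowest resolution value in Å (i.e., highest
    resolution), then the most recent deposition date as a tie-breaker.
    Returns a dict mapping cluster_id -> pdb_id.
    """
    best = {}
    for entry in entries:
        # Smaller key is better: lower Å first, then later date.
        key = (entry["resolution"], -entry["deposited"].toordinal())
        current = best.get(entry["cluster_id"])
        if current is None or key < current[0]:
            best[entry["cluster_id"]] = (key, entry["pdb_id"])
    return {cluster_id: pdb_id for cluster_id, (_, pdb_id) in best.items()}


# Hypothetical clustered entries.
entries = [
    {"cluster_id": 0, "pdb_id": "1ABC", "resolution": 2.1, "deposited": date(2012, 5, 1)},
    {"cluster_id": 0, "pdb_id": "2DEF", "resolution": 1.8, "deposited": date(2011, 3, 9)},
    {"cluster_id": 1, "pdb_id": "3GHI", "resolution": 2.0, "deposited": date(2015, 7, 20)},
    {"cluster_id": 1, "pdb_id": "4JKL", "resolution": 2.0, "deposited": date(2019, 1, 15)},
]
print(pick_representatives(entries))  # {0: '2DEF', 1: '4JKL'}
```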

Data Download & Processing: For each selected PDB ID:

- Download the structure file (mmCIF format recommended).

- Use Biopython or MDTraj to isolate the protein chain(s) and all non-water, non-ion ligands within 5 Å of the protein.

- Extract atomic coordinates, element types, and residue types.

- Parse the SITE records in the PDB file (the `struct_site` category in mmCIF) to annotate known functional/binding sites.

Feature Encoding: For each residue/atom, compute and store:

- Geometric: Solvent accessible surface area (SASA), dihedral angles, secondary structure (DSSP).

- Chemical: One-hot encoding of residue type, atomic partial charges (from force field), hydrophobicity index.

- Neighborhood: Radial basis function (RBF) distances to k-nearest neighbors.

Graph Construction: Represent each complex as a graph where nodes are residues (or atoms) and edges connect residues within a 10Å cutoff. Node features are the computed descriptors; edge features include distance and direction vectors.
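A minimal sketch of the graph construction step, using one coordinate per residue (for example the Cα atom) and plain Python. The RBF centers, the `gamma` width, and the dictionary layout of each edge are illustrative assumptions; a production pipeline would typically build these tensors with PyTorch Geometric or DGL and use a spatial index instead of the quadratic loop.

```python
import math


def rbf_encode(distance, centers, gamma=1.0):
    """Expand a scalar distance into Gaussian radial basis features."""
    return [math.exp(-gamma * (distance - c) ** 2) for c in centers]


def build_residue_graph(coords, cutoff=10.0, centers=(2.0, 4.0, 6.0, 8.0, 10.0)):
    """Connect every ordered pair of residues within `cutoff` Å.

    `coords` is a list of (x, y, z) tuples, one per residue. Each edge
    stores the distance, the unit direction vector from source to
    destination, and the RBF-encoded distance.
    """
    edges = []
    for i, a in enumerate(coords):
        for j, b in enumerate(coords):
            if i == j:
                continue
            d = math.dist(a, b)
            if d > cutoff:
                continue
            # Guard against coincident points to avoid division by zero.
            if d > 0.0:
                direction = tuple((bj - aj) / d for aj, bj in zip(a, b))
            else:
                direction = (0.0, 0.0, 0.0)
            edges.append({
                "src": i,
                "dst": j,
                "distance": d,
                "direction": direction,
                "rbf": rbf_encode(d, centers),
            })
    return edges


# Three hypothetical residue positions; only the first two are within 10 Å.
coords = [(0.0, 0.0, 0.0), (3.0, 4.0, 0.0), (20.0, 0.0, 0.0)]
edges = build_residue_graph(coords)
print(len(edges), edges[0]["distance"])  # 2 5.0
```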

Protocol 3.2: Integrating AlphaFold DB Predictions for a Target of Unknown Structure

Objective: Obtain, validate, and prepare an AlphaFold-predicted structure for in silico docking.

Materials:

- AlphaFold DB REST API or Google Cloud Public Dataset access.

- Molecular visualization software (PyMOL, UCSF ChimeraX).

- Structure preparation software (OpenBabel, Schrödinger's Protein Preparation Wizard, or pdbfixer).

Procedure:

- Retrieval: Query the AlphaFold DB (https://alphafold.ebi.ac.uk/api/prediction/{UNIPROT_ID}) using the canonical UniProt identifier of your target. Download the predicted model file (PDB or mmCIF) and the associated JSON file containing pLDDT and PAE data.

Confidence Assessment:

- Load the predicted model in visualization software. Color the structure by pLDDT, which is stored in the B-factor field.

- Identify low-confidence regions (pLDDT < 70). These loops or termini may require modeling refinement or deletion for docking.

- Analyze the PAE matrix plot (from the JSON file) to check for domain-level errors. Low confidence in inter-domain orientation may necessitate using only a single, high-confidence domain.

Structure Preparation:

- Use pdbfixer to add missing hydrogens at physiological pH (7.4).

- If low-confidence regions are not near the putative active site (based on literature or homology), delete them to simplify the model.

- Run a brief energy minimization (e.g., using OpenMM or GROMACS with a standard protein force field such as AMBER ff14SB) to relieve steric clashes, keeping the majority of the backbone restrained to preserve the AF2 prediction.

Active Site Definition: If no experimental site is known, use computational methods (e.g., fpocket, DeepSite) on the prepared structure to predict potential binding pockets. Prioritize pockets with high conservation (from ConSurf analysis) and high average pLDDT scores.
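The confidence assessment step can also be done without a viewer, because AlphaFold models store per-residue pLDDT in the B-factor column of the PDB file. The sketch below reads that column from Cα atoms using the fixed-width ATOM record layout and groups consecutive low-confidence residues into segments. The function names are hypothetical and the 70 threshold follows the protocol above.

```python
def residue_plddt(pdb_lines):
    """Return {(chain, residue_number): pLDDT} read from Cα ATOM records.

    Fixed PDB columns (0-based slices): atom name 12:16, chain 21,
    residue number 22:26, B-factor 60:66.
    """
    scores = {}
    for line in pdb_lines:
        if line.startswith("ATOM") and line[12:16].strip() == "CA":
            chain = line[21]
            resseq = int(line[22:26])
            scores[(chain, resseq)] = float(line[60:66])
    return scores


def low_confidence_segments(scores, threshold=70.0):
    """Group consecutive residues below `threshold` into (chain, start, end)."""
    segments = []
    current = None
    for chain, resseq in sorted(scores):
        if scores[(chain, resseq)] < threshold:
            if current and current[0] == chain and current[2] == resseq - 1:
                current[2] = resseq  # extend the open segment
            else:
                current = [chain, resseq, resseq]
                segments.append(current)
        else:
            current = None  # a confident residue closes the segment
    return [tuple(segment) for segment in segments]
```

With a downloaded model, `low_confidence_segments(residue_plddt(open(path)))` would list the loops or termini to consider trimming, where `path` is the local file name of the model.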

Diagrams

Title: Data Flow from PDB and AlphaFold DB to ML Models

Title: Protocol for Using an AlphaFold DB Prediction

The Scientist's Toolkit

Table 3: Essential Research Reagents & Computational Tools

| Item | Category | Function in Protocol |

|---|---|---|

| RCSB PDB REST API | Web Service | Programmatic querying and metadata retrieval from the PDB. |

| MMseqs2 / CD-HIT | Software Tool | Rapid clustering of protein sequences to reduce dataset redundancy. |

| Biopython / MDTraj | Python Library | Parsing structural files (PDB, mmCIF), geometric calculations, and data extraction. |

| AlphaFold DB API | Web Service | Programmatic retrieval of predicted structures and confidence metrics. |

| PyMOL / UCSF ChimeraX | Visualization Software | Visual inspection of structures, coloring by confidence (pLDDT), and active site analysis. |

| PDBFixer / OpenBabel | Software Tool | Adding missing atoms, hydrogens, and performing basic structure cleanup. |

| OpenMM / GROMACS | Molecular Dynamics Engine | Energy minimization to relieve steric clashes in predicted models. |

| fpocket / DeepSite | Software Tool | Predicting potential ligand-binding pockets on protein surfaces. |

| PyTorch Geometric / DGL | Python Library | Building and training graph neural network models on structural data. |

The inverse folding problem—determining an amino acid sequence that will fold into a predetermined three-dimensional protein structure—represents a core challenge in computational biology. Within the broader thesis of employing AI and machine learning (ML) for protein design in therapeutics, solving this problem is pivotal. It enables the de novo design of novel protein therapeutics, enzymes, and vaccines with tailored functions and stabilities, moving beyond natural evolutionary constraints. Recent breakthroughs in deep learning architectures have transformed this field from a theoretical pursuit into a practical pipeline for drug development.

Current State of AI/ML Models for Inverse Folding

The following table summarizes key quantitative performance metrics for leading deep learning models in protein inverse folding, based on recent benchmarks.

Table 1: Performance Comparison of Recent Inverse Folding Models

| Model Name (Year) | Architecture Core | Key Training Data | Design Success Rate (Top-1 Recovery) | Sequence Recovery on Native Pairs | Computational Speed (Per Design) | Key Therapeutic Application Focus |

|---|---|---|---|---|---|---|

| ProteinMPNN (2022) | Message Passing Neural Network | CATH, PDB | ~52% (CATH 4.2) | ~33.5% | ~0.2 seconds | High-accuracy de novo scaffolds, symmetric assemblies |

| RFdiffusion (2023) | Diffusion Model + RoseTTAFold | PDB, synthetic | High (varies by task) | N/A | Minutes (GPU) | De novo binder design, motif scaffolding |

| ESM-IF1 (2022) | Inverse Folding Transformer | PDB | ~51% (CATH 4.2) | ~32.8% | Seconds | Fixed-backbone design, variant generation |

| Chroma (2023) | Diffusion Model (Latent) | PDB, AlphaFold DB | State-of-the-art on complex tasks | N/A | Minutes (GPU) | Large protein complexes, functional site design |

Core Experimental Protocol: Validating AI-Designed Sequences

This protocol details the experimental validation pipeline for sequences generated by inverse folding models, a critical step for therapeutic development.

Protocol: In Silico and In Vitro Validation of Designed Protein Sequences

Objective: To express, purify, and biophysically characterize a protein from an AI-designed sequence to confirm it adopts the target structure.

Materials & Reagents:

- Synthetic Gene Fragment: Codon-optimized for expression system (e.g., E. coli).

- Cloning Vector: (e.g., pET series with His-tag for purification).

- Competent Cells: For cloning (DH5α) and expression (BL21(DE3)).

- LB Media & Antibiotics: For bacterial culture.

- Induction Agent: Isopropyl β-d-1-thiogalactopyranoside (IPTG).

- Lysis & Purification Buffers: Including imidazole for immobilized metal affinity chromatography (IMAC).

- Size Exclusion Chromatography (SEC) Column: For final polishing.

- Circular Dichroism (CD) Spectrometer.

- Differential Scanning Calorimetry (DSC) or Fluorimeter.

- SEC-Multi-Angle Light Scattering (SEC-MALS) system.

Procedure:

Part A: In Silico Folding Confidence Check

- Input: Generate candidate sequences using your chosen inverse folding model (e.g., ProteinMPNN) and your target backbone structure (PDB file).

- Folding Prediction: Submit the designed sequences to a structure prediction network (e.g., AlphaFold2, ESMFold). Use the model's confidence metrics (pLDDT, pTM).

- Analysis: Select sequences where the predicted structure has a low root-mean-square deviation (RMSD < 2.0 Å) to the target backbone and high per-residue confidence (pLDDT > 80).
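The selection criterion in the analysis step can be sketched as below. One important assumption: the RMSD function expects the two coordinate sets to be already superimposed (for example by a structural alignment tool), because it does not perform the optimal rotation and translation itself. The function names and example coordinates are hypothetical.

```python
import math


def ca_rmsd(coords_a, coords_b):
    """RMSD in Å between two equal-length lists of (x, y, z) positions.

    Assumes the structures are already superimposed; no alignment is done.
    """
    if len(coords_a) != len(coords_b) or not coords_a:
        raise ValueError("coordinate lists must be non-empty and equal in length")
    squared = sum(
        (a - b) ** 2
        for point_a, point_b in zip(coords_a, coords_b)
        for a, b in zip(point_a, point_b)
    )
    return math.sqrt(squared / len(coords_a))


def passes_filter(rmsd, mean_plddt, max_rmsd=2.0, min_plddt=80.0):
    """Apply the RMSD < 2.0 Å and pLDDT > 80 thresholds from the protocol."""
    return rmsd < max_rmsd and mean_plddt > min_plddt


# Hypothetical two-residue example: the prediction is shifted 1 Å along z.
target = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
predicted = [(0.0, 0.0, 1.0), (1.0, 0.0, 1.0)]
rmsd = ca_rmsd(target, predicted)
print(rmsd, passes_filter(rmsd, mean_plddt=85.0))  # 1.0 True
```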

Part B: Gene Synthesis, Cloning, and Expression

- Gene Synthesis: Order the top 3-5 selected sequences as codon-optimized gene fragments.

- Cloning: Subclone each gene into an expression vector using restriction enzyme/ligation or Gibson assembly. Transform into cloning cells, screen colonies by colony PCR, and verify plasmid by Sanger sequencing.

- Expression: Transform verified plasmid into expression cells. Grow a 50 mL overnight culture, inoculate 1 L of main culture. Grow at 37°C to OD600 ~0.6-0.8, induce with 0.5-1.0 mM IPTG, and express at appropriate temperature (often 18-20°C) for 16-20 hours.

Part C: Purification and Biophysical Characterization

- Lysis and IMAC: Harvest cells by centrifugation, lyse by sonication, and clarify by centrifugation. Pass the supernatant over a Ni-NTA column. Wash with buffer containing 20-40 mM imidazole, elute with 250-500 mM imidazole.

- Polishing: Further purify the eluate by size exclusion chromatography (SEC). Analyze the SEC elution profile for a single, monodisperse peak.

- Secondary Structure (CD): Dilute purified protein to ~0.2 mg/mL in appropriate buffer. Acquire CD spectrum from 260-190 nm. Compare the spectrum's shape (double minima at ~208 nm & ~222 nm for α-helix) to that expected from the target structure.

- Thermal Stability (DSC/DSF):

- DSC: Load protein at >0.5 mg/mL. Perform a temperature ramp (e.g., 20-100°C). Record the melting temperature (Tm).

- DSF: Mix protein with a fluorescent dye (e.g., SYPRO Orange). Monitor fluorescence during a temperature ramp in a real-time PCR machine. Derive Tm from the inflection point.

- Oligomeric State (SEC-MALS): Inject purified sample onto an SEC column coupled to MALS and refractive index detectors. Calculate the absolute molecular weight from light scattering data to confirm the designed monomeric or multimeric state.

Expected Outcomes: A successfully designed protein will express solubly, purify as a single peak, exhibit a CD spectrum consistent with the target fold, display a high thermal stability (often Tm > 60°C), and confirm the intended oligomeric state.
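For DSF, deriving Tm "from the inflection point" usually means locating the temperature where the first derivative of fluorescence with respect to temperature is largest. The sketch below does this with central differences on a raw melt curve. It assumes a single unfolding transition and a reasonably smooth signal; noisy data would need smoothing or curve fitting first. The function name and the synthetic curve are illustrative.

```python
import math


def tm_from_melt_curve(temps, fluorescence):
    """Return the temperature at which dF/dT is maximal.

    Uses central differences at interior points, so the first and last
    temperatures can never be returned.
    """
    if len(temps) != len(fluorescence) or len(temps) < 3:
        raise ValueError("need at least three matched (T, F) points")
    best_temp, best_slope = None, float("-inf")
    for i in range(1, len(temps) - 1):
        slope = (fluorescence[i + 1] - fluorescence[i - 1]) / (temps[i + 1] - temps[i - 1])
        if slope > best_slope:
            best_temp, best_slope = temps[i], slope
    return best_temp


# Synthetic sigmoidal melt curve with its midpoint at 62 °C.
temps = list(range(25, 96))
signal = [1.0 / (1.0 + math.exp(-(t - 62) / 2.0)) for t in temps]
print(tm_from_melt_curve(temps, signal))  # 62
```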

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents and Resources for Inverse Folding Experiments

| Item | Function & Relevance |

|---|---|

| High-Quality Structural Datasets (PDB, CATH, AlphaFold DB) | Training and benchmarking data for AI models. The non-redundancy and quality are critical. |

| ProteinMPNN Web Server / Codebase | Currently the most robust and widely used inverse folding model for fixed-backbone design. Accessible for non-specialists. |

| AlphaFold2 or ESMFold Colab Notebooks | Essential for the in silico confidence check, providing a rapid, low-cost filter for designed sequences before wet-lab experiments. |

| Codon-Optimized Gene Synthesis Service | Turns digital designs into physical DNA. Rapid synthesis (2-5 days) is key for iterative design-test cycles. |

| High-Throughput Cloning & Expression Kits (e.g., Ligation-Independent) | Accelerates the testing of multiple designed variants in parallel, essential for screening. |

| His-tag Purification Kits (IMAC) | Standardized, reliable first-step purification for tens to hundreds of designed proteins. |

| Pre-packed SEC Columns (e.g., Superdex series) | For assessing protein purity, monodispersity, and approximate size in a reproducible manner. |

| Differential Scanning Fluorimetry (DSF) Dyes (e.g., SYPRO Orange) | Enables medium-to-high-throughput thermal stability screening of purified designs in a plate reader format. |

Visualizations

AI-Driven Inverse Folding & Validation Workflow

AI Model Categories for Inverse Protein Design

Within the broader thesis on AI and machine learning for protein design for therapeutics, this document details the application of generative AI to create novel protein folds and functions de novo. This approach moves beyond natural protein libraries, enabling the discovery of unique protein scaffolds and binders with therapeutic potential.

Core Generative Models: Architectures and Quantitative Benchmarks

Current methods primarily leverage deep generative models trained on the Protein Data Bank (PDB). Key architectures and their performance metrics are summarized below.

Table 1: Comparison of Key Generative AI Models for De Novo Protein Design

| Model Name | Model Architecture | Key Feature | Reported Success Rate (Experimental Validation) | Typical Design Cycle Time | Primary Application |

|---|---|---|---|---|---|

| RFdiffusion | Diffusion Model (built on RoseTTAFold) | Conditional generation based on structural motifs | ~20% (for symmetric assemblies) | Hours to days | Symmetric scaffolds, binder design |

| Chroma | Diffusion Model (SE(3)-Equivariant) | Geometry-aware, conditioning on various properties (symmetry, function) | High (per cited examples) | Minutes to hours | Multi-conditional design (scaffolds, enzymes) |

| ProteinMPNN | Graph Neural Network (GNN) | Fast sequence design for backbones | >50% (sequence recovery on native backbones) | Seconds | Inverse folding (sequence design) |

| AlphaFold2 (as validation tool) | Transformer/Evoformer | State-of-the-art structure prediction | N/A (used for validation) | Minutes per structure | In silico validation of designed proteins |

| ESM-2/ESMFold | Large Language Model (Transformer) | Sequence-to-structure generation & prediction | N/A | Seconds to minutes | Co-design of sequence & structure |

Detailed Protocol: Generating a Novel Protein Scaffold with RFdiffusion

This protocol outlines the steps for generating a novel symmetric protein scaffold.

Materials & Software (The Scientist's Toolkit)

Table 2: Essential Research Reagent Solutions & Computational Tools

| Item Name | Provider/Software | Function in Protocol |

|---|---|---|

| RFdiffusion Colab Notebook | The Rosetta Commons / Sergey Ovchinnikov Lab | Primary interface for running RFdiffusion with default parameters. |

| AlphaFold2 (Local Installation or Colab) | DeepMind / Jumper et al. | In silico validation of generated protein models. |

| PyMOL or ChimeraX | Schrödinger / UCSF | Visualization and analysis of 3D protein structures. |

| PDB File of Motif (Optional) | Protein Data Bank (rcsb.org) | Provides a structural "seed" for conditional generation (e.g., a binding site). |

| Cloning Vector (e.g., pET series) | Novagen / Addgene | For downstream experimental expression of designed sequences. |

| E. coli Expression Cells (BL21(DE3)) | Thermo Fisher, New England Biolabs | Heterologous protein expression host. |

| Ni-NTA Resin | Qiagen, Cytiva | Purification of His-tagged designed proteins. |

| Size Exclusion Chromatography Column | Cytiva (Superdex series) | Polishing step to isolate monodisperse protein. |

Step-by-Step Procedure

Step 1: Define Design Goal and Parameters

- Objective: Specify desired symmetry (e.g., C2, D3), approximate size (number of residues), and any required functional motifs.

- Input Preparation: If conditioning on a motif, prepare a PDB file of the target motif or specify the desired protein-protein interface.

Step 2: Run RFdiffusion Generation

- Access the RFdiffusion Colab notebook.

- Set parameters in the inference section:

- contigs: Define the scaffold. E.g., "80-120" for an 80-120 residue chain.

- symmetry: Define symmetry. E.g., "C3" for cyclic symmetry with 3 copies.

- hotspot_res: (Optional) Specify residues from a motif PDB to guide generation.

- Execute the diffusion sampling. The model will generate multiple (e.g., 100) backbone structures in PDB format.

Step 3: Sequence Design with ProteinMPNN

- Feed the generated backbone PDBs into ProteinMPNN.

- Run ProteinMPNN to design optimal amino acid sequences that stabilize each backbone.

- Output: A set of designed protein sequences (FASTA format) paired with their backbone structures.

Step 4: In Silico Validation with AlphaFold2

- Input the designed FASTA sequences into AlphaFold2.

- Run structure prediction. The critical output is the predicted local distance difference test (pLDDT) score.

- Analysis: Select designs where the AlphaFold2-predicted structure closely matches the generative model's backbone (RMSD < 2.0 Å) and has high average pLDDT (>80).
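The selection rule in Step 4 can be sketched in a few lines of Python. This is a minimal illustration, not part of any AlphaFold2 tooling: it assumes the predicted model is available as PDB text (AlphaFold2 writes per-residue pLDDT into the B-factor column) and that the RMSD to the generated backbone has already been computed elsewhere after superposition. The names `mean_plddt_from_pdb`, `Design`, and `passes_filter` are hypothetical.

```python
from dataclasses import dataclass


def mean_plddt_from_pdb(pdb_text: str) -> float:
    """Average the B-factor column (PDB columns 61-66) over C-alpha atoms.

    AlphaFold2 stores per-residue pLDDT in this column of its output models.
    """
    scores = []
    for line in pdb_text.splitlines():
        # Columns 13-16 hold the atom name in the fixed-width ATOM record.
        if line.startswith("ATOM") and line[12:16].strip() == "CA":
            scores.append(float(line[60:66]))
    if not scores:
        raise ValueError("no C-alpha atoms found in PDB text")
    return sum(scores) / len(scores)


@dataclass
class Design:
    name: str
    rmsd_to_backbone: float  # in Å, computed elsewhere (assumption)
    mean_plddt: float


def passes_filter(design: Design, max_rmsd: float = 2.0, min_plddt: float = 80.0) -> bool:
    """Apply the thresholds quoted in the protocol: RMSD < 2.0 Å and mean pLDDT > 80."""
    return design.rmsd_to_backbone < max_rmsd and design.mean_plddt > min_plddt
```

Both thresholds are exposed as parameters so they can be tightened or relaxed per project.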

Step 5: Experimental Expression and Purification (Abridged Protocol)

- Gene Synthesis & Cloning: Order selected sequences as gene fragments and clone into an expression vector (e.g., pET-28a with a His-tag).

- Transformation: Transform plasmid into expression host (e.g., E. coli BL21(DE3)).

- Expression: Grow culture to OD600 ~0.6, induce with 0.5 mM IPTG, and express at 18°C for 16-18 hours.

- Purification:

- Lyse cells via sonication in lysis buffer (e.g., 50 mM Tris, 300 mM NaCl, pH 8.0).

- Purify soluble protein using Ni-NTA affinity chromatography.

- Further purify via size-exclusion chromatography (SEC).

- Validation: Analyze SEC elution profile for monodispersity. Confirm structure via Circular Dichroism (secondary structure) and/or SEC-MALS (oligomeric state).

Signaling Pathway for Functional Protein Design

Diagram Title: AI-Driven Design Cycle for Therapeutic Proteins

Workflow for De Novo Protein Generation & Validation

Diagram Title: De Novo Protein Design and Validation Workflow

Tools in Action: Applying AI Models to Design Real-World Therapeutics

De Novo Enzyme Design for Catalysis and Degradation

Within the broader thesis of applying AI and machine learning (ML) to protein design for therapeutics, de novo enzyme design represents a frontier with profound implications. This capability shifts the paradigm from discovering natural enzymes to computationally inventing proteins with tailored catalytic functions. For drug development, this enables the creation of therapeutic enzymes for metabolite clearance, prodrug activation, or degradation of pathological agents, moving beyond traditional small-molecule inhibitors. The integration of deep learning models for structure prediction (e.g., AlphaFold2, RoseTTAFold) and generative models for backbone and sequence design (e.g., RFdiffusion, ProteinMPNN) has dramatically accelerated the design-build-test-learn cycle, making the rational engineering of catalysts for novel reactions a tangible reality.

Core Application Notes

Key Design Strategies and Outcomes

De novo enzyme design workflows typically follow a reaction-driven approach: 1) Define the reaction mechanism and transition state (TS), 2) Generate an idealized active site (theozyme) complementary to the TS, 3) Scaffold the theozyme into a stable protein backbone, and 4) Iteratively refine the design using ML.

Table 1: Quantitative Performance Benchmarks of Recent De Novo Designed Enzymes

| Target Reaction | Design Method | Initial kcat/KM (M⁻¹s⁻¹) | After Directed Evolution | Therapeutic Relevance |

|---|---|---|---|---|

| Kemp Elimination | ROSETTA + Theozyme | 10² - 10³ | 10⁵ | Model for catalytic principles |

| Retro-Aldol Reaction | ROSETTA + Theozyme | 0.04 | 3.4 x 10⁴ | C-C bond cleavage for degradation |

| Non-native C-H Amination | ROSETTA + ML-guided active site packing | N.D. | 1,030 TTN | Potential for synthetic metabolite production |

| Hydrolysis of Organophosphates (e.g., paraoxon) | RFdiffusion + ProteinMPNN | Detectable activity in top designs | Under investigation | Nerve agent detoxification |

| Degradation of β-Lactam Antibiotics | Sequence-based generative models | Variant-dependent | >100-fold improvement | Addressing antibiotic resistance |

AI/ML Toolbox for Enzyme Design

Generative Models: RFdiffusion and Chroma generate novel protein backbones conditioned on functional site constraints. Sequence Design Models: ProteinMPNN and ESM-IF provide high-probability, stable sequences for given backbones. Fitness Prediction Models: Models like ESM-2 and GEMME can predict stability and functional scores, prioritizing designs for experimental testing.

Detailed Experimental Protocols

Protocol: Computational Design of a Hydrolase for Plastic Degradation (PETase Mimetic)

Objective: Design a novel enzyme capable of hydrolyzing polyethylene terephthalate (PET) ester bonds.

Materials:

- Hardware: High-performance computing cluster with GPU access.

- Software: PyRosetta, RFdiffusion/Chroma, ProteinMPNN, AlphaFold2, MD simulation suite (e.g., GROMACS).

Procedure:

- Theozyme Construction:

- Define the hydrolytic reaction coordinates (nucleophilic attack, tetrahedral intermediate, bond cleavage).

- Using quantum mechanics (QM) software (e.g., Gaussian), optimize the geometry of the transition state analog (TSA).

- Manually or using PLACEHOLDER, arrange a minimal set of catalytic residues (e.g., Ser-His-Asp triad, oxyanion hole donors) around the TSA with ideal geometries.

Active Site Scaffolding with RFdiffusion:

- Format the theozyme residues (Cα atoms and sidechain conformers) as a motif input for RFdiffusion.

- Run RFdiffusion in "motif-scaffolding" mode to generate hundreds of novel protein backbones that precisely position the motif.

- Apply distance and angle constraints to maintain catalytic geometry.

Sequence Design with ProteinMPNN:

- For each generated backbone, run ProteinMPNN in "fixed residues" mode, freezing the identities and conformations of the catalytic motif residues.

- Generate multiple sequence variants for each scaffold, selecting outputs with high confidence scores.

In Silico Validation:

- Fold each designed sequence using AlphaFold2 or RoseTTAFold. Discard designs with low confidence (pLDDT < 80) or poor motif geometry.

- Perform molecular docking of the substrate (e.g., bis(2-hydroxyethyl) terephthalate, BHET) into the predicted structure.

- Run short, targeted MD simulations to assess active site stability and substrate binding. Select top 50 designs for experimental testing.

Protocol: High-Throughput Screening of Designed Enzymes

Objective: Express, purify, and assay computationally designed enzymes for catalytic activity.

Materials:

- Reagent Solutions: See "The Scientist's Toolkit" below.

- Equipment: Robotic liquid handler, microplate spectrophotometer/fluorometer, FPLC system, SDS-PAGE equipment.

Procedure:

- Gene Synthesis and Cloning:

- Synthesize genes encoding the top 50 designs, codon-optimized for E. coli expression. Clone into a T7 expression vector (e.g., pET series) with a C-terminal His-tag.

Parallel Expression and Purification:

- Transform plasmids into BL21(DE3) E. coli cells. Inoculate 96-deep-well plates with auto-induction media.

- Grow at 37°C until OD600 ~0.6, then shift to 18°C for 18-24 hours of auto-induced expression.

- Lyse cells via sonication or chemical lysis in 96-well format.

- Purify proteins using immobilized metal affinity chromatography (IMAC) in a 96-well filter plate format. Elute with imidazole buffer.

- Desalt into assay buffer using spin columns. Confirm purity via SDS-PAGE.

Activity Screening:

- For Hydrolases: Use a fluorescent or chromogenic substrate analog (e.g., 4-nitrophenyl acetate for esterases). In a 384-well plate, mix enzyme with substrate.

- Monitor product formation kinetically (e.g., release of 4-nitrophenol at 405 nm) for 30 minutes.

- Calculate initial velocities. Designs showing activity above negative control (empty vector lysate) are considered "hits."

Hit Characterization:

- Scale up expression and purification of hit designs for detailed kinetic analysis (KM, kcat).

- Validate folding via circular dichroism (CD) spectroscopy.

- Initiate directed evolution (error-prone PCR, site-saturation mutagenesis) to improve activity and stability.
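The initial-velocity and hit-calling steps of the activity screen can be sketched as follows. This is an illustrative sketch only: the velocity is taken as the least-squares slope of absorbance against time over the first few reads, and the `fold_over_control` margin is an assumption (the protocol only says "above negative control"). Function names are hypothetical.

```python
def initial_velocity(times_s, absorbances, window=5):
    """Least-squares slope (absorbance units per second) over the first `window` reads."""
    t = list(times_s[:window])
    a = list(absorbances[:window])
    if len(t) < 2 or len(t) != len(a):
        raise ValueError("need at least two matched time/absorbance points")
    t_mean = sum(t) / len(t)
    a_mean = sum(a) / len(a)
    numerator = sum((ti - t_mean) * (ai - a_mean) for ti, ai in zip(t, a))
    denominator = sum((ti - t_mean) ** 2 for ti in t)
    return numerator / denominator


def call_hits(velocities, control_velocity, fold_over_control=3.0):
    """Return design names whose velocity exceeds the negative control by a chosen margin.

    `fold_over_control` is an assumed cutoff, not a value taken from the protocol.
    """
    threshold = fold_over_control * control_velocity
    return sorted(name for name, v in velocities.items() if v > threshold)
```

Converting the slope into a molar rate would additionally require the extinction coefficient of the product and the path length of the plate, which are not specified here.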

Visualizations

Diagram Title: AI-Driven Design-Build-Test-Learn Cycle for Enzyme Engineering

Diagram Title: Theozyme Construction Around a Transition State

The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for De Novo Enzyme Workflows

| Reagent / Material | Function / Application | Key Considerations |

|---|---|---|

| T7 Expression Vector (e.g., pET series) | High-level, inducible protein expression in E. coli. | Codon optimization for design genes is critical for solubility. |

| Auto-induction Media | Simplified expression in deep-well plates; induces upon glucose depletion. | Enables high-throughput, parallel culture growth without manual induction. |

| Nickel-NTA Resin (IMAC) | Immobilized metal affinity chromatography for His-tagged protein purification. | 96-well filter plate format enables parallel mini-purifications. |

| Chromogenic/Fluorogenic Substrate Analogs (e.g., 4-NPA, 4-NPB) | High-sensitivity detection of hydrolytic activity in microplate assays. | Must mimic the target reaction's chemistry; used for primary screening. |

| Phusion/Ultra II Q5 Polymerase | High-fidelity PCR for cloning and site-directed mutagenesis. | Essential for generating libraries for directed evolution of initial hits. |

| Size-Exclusion Chromatography (SEC) Column (e.g., Superdex 75) | Analytical purification and assessment of protein monomericity/aggregation. | Confirms proper folding of designed enzymes post-IMAC. |

| Thermal Shift Dye (e.g., SYPRO Orange) | Measures protein thermal stability (Tm) in a real-time PCR instrument. | High-throughput stability assessment to filter poorly folded designs. |

| Rosetta/MPNN Software Suites | Computational protein design and sequence prediction. | Require significant GPU/CPU resources and structural biology expertise. |

AI-Driven Antibody and Nanobody Engineering for Enhanced Affinity & Stability

Application Notes

The integration of AI and machine learning (ML) into antibody engineering represents a paradigm shift in therapeutic discovery. Within the broader thesis of AI for protein design, these computational methods accelerate the development of biologics with superior binding affinity and thermal stability, directly addressing key challenges in drug development such as efficacy, manufacturability, and shelf-life.

Core AI/ML Methodologies:

- Deep Learning Models for Structure Prediction: Tools like AlphaFold2 and RoseTTAFold provide high-accuracy structural models of antibody-antigen complexes, which serve as critical inputs for subsequent engineering steps.

- Generative Models for Sequence Design: Variational Autoencoders (VAEs) and Generative Adversarial Networks (GANs) are trained on known antibody sequence-structure-function datasets to generate novel, optimized sequences with desired properties.

- In-Silico Affinity Maturation: ML models, particularly graph neural networks (GNNs) trained on molecular dynamics (MD) simulations, predict the change in binding free energy (\(\Delta\Delta G\)) upon mutation, enabling rapid virtual screening of millions of variants.

- Stability Prediction Models: Learned representations from protein language models (e.g., ESM-2) are fine-tuned to predict thermal stability metrics like melting temperature (\(T_m\)) directly from sequence.

Key Quantitative Outcomes from Recent Studies:

Table 1: Reported Performance of AI-Driven Antibody Engineering

| Target / Property | Baseline Affinity (nM) | AI-Optimized Affinity (nM) | Fold Improvement | Stability Change (\(\Delta T_m\)) | Key AI Method |

|---|---|---|---|---|---|

| SARS-CoV-2 RBD | 5.2 | 0.056 | 93x | +3.2°C | GNN-based \(\Delta\Delta G\) prediction |

| HER2 | 10.1 | 0.71 | 14x | +5.1°C | VAE sequence generation & ranking |

| IL-6 Receptor | 3.8 | 0.21 | 18x | +4.5°C | ProteinMPNN & RosettaDDG |

| Generic Nanobody | N/A | N/A | N/A | +7.8°C | ESM-2 fine-tuned for stability |
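The fold improvements in Table 1 can be related to a binding free energy change through the standard relation \(\Delta\Delta G = RT \ln(K_{D,\text{optimized}} / K_{D,\text{baseline}})\). The sketch below assumes a temperature of 25°C (298.15 K), which the table does not state; the function name is hypothetical.

```python
import math

R_KCAL_PER_MOL_K = 1.987204e-3  # gas constant in kcal/(mol*K)


def ddg_from_kd(kd_baseline_nm: float, kd_optimized_nm: float, temp_k: float = 298.15) -> float:
    """Binding free energy change (kcal/mol) implied by a change in K_D.

    Negative values mean tighter binding. The 298.15 K default is an assumption.
    """
    return R_KCAL_PER_MOL_K * temp_k * math.log(kd_optimized_nm / kd_baseline_nm)


# SARS-CoV-2 RBD row of Table 1: 5.2 nM -> 0.056 nM
ddg_rbd = ddg_from_kd(5.2, 0.056)
```

Under this assumption the 93x improvement in the first row corresponds to roughly -2.7 kcal/mol.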

Table 2: Comparative Throughput of Traditional vs. AI-Enhanced Workflows

| Development Stage | Traditional Method | Typical Duration | AI-Enhanced Method | Typical Duration | Speed Gain |

|---|---|---|---|---|---|

| Lead Identification | Hybridoma / Phage Display | 3-6 months | In-silico Library Design & Screening | 2-4 weeks | ~4x |

| Affinity Maturation | Error-Prone PCR & Screening | 4-8 months | \(\Delta\Delta G\) ML Prediction & Validation | 3-6 weeks | ~6x |

| Developability Assessment | Low-throughput analytics | 1-2 months | ML-based prediction of viscosity, aggregation | <1 week | >8x |

Experimental Protocols

Protocol 1: In-Silico Affinity Maturation Using Graph Neural Networks (GNNs)

Objective: To generate and rank single-point mutations in the Complementarity-Determining Region (CDR) of an antibody for improved binding affinity.

Materials:

- Starting antibody-antigen complex structure (PDB file or AlphaFold2 prediction).

- High-performance computing (HPC) cluster or cloud instance with GPU acceleration.

- Software: PyTorch, PyTorch Geometric, Rosetta, or dedicated ML protein design suite.

Procedure:

- Structure Preparation: Clean the PDB file using PDBFixer or Chimera. Protonate the structure at pH 7.4 using H++ or PROPKA.

- Define Mutation Site: Isolate CDR residues (e.g., H3, L3) within 8Å of the antigen in the paratope.

- Generate Mutant Library: Create in-silico all possible single-point mutants (19 variants per residue) for the selected sites.

- Feature Extraction: For each mutant structure (modeled with Rosetta or ABACUS), generate a graph representation. Nodes (residues) are featurized with physicochemical properties, evolutionary scores from PSSM. Edges encode distances and angles.

- \(\Delta\Delta G\) Prediction: Input the graph representation into a pre-trained GNN model (e.g., DeepAb, ProteinMPNN for sequence, followed by AttentiveFP for affinity scoring). The model outputs a predicted \(\Delta\Delta G\) (kcal/mol) for each mutation.

- Ranking & Selection: Rank all mutants by predicted \(\Delta\Delta G\). Select top 20-50 stabilizing mutations (negative \(\Delta\Delta G\)) for experimental validation.

- In-Vitro Validation: Proceed to Protocol 3.
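The library-generation and ranking steps of this protocol can be sketched as below. The scoring function is deliberately left as a caller-supplied `predict_ddg` callable, a hypothetical stand-in for whatever trained model is used; positions are 0-based indices into a plain sequence string, which is an assumption made for simplicity (real CDR numbering schemes differ).

```python
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def single_point_mutants(sequence, positions):
    """Yield (label, mutant_sequence) for all 19 substitutions at each 0-based position."""
    for pos in positions:
        wild_type = sequence[pos]
        for aa in AMINO_ACIDS:
            if aa != wild_type:
                label = f"{wild_type}{pos + 1}{aa}"  # e.g. "R2K", 1-based in the label
                yield label, sequence[:pos] + aa + sequence[pos + 1:]


def rank_by_ddg(mutants, predict_ddg, top_n=20):
    """Score mutants with a user-supplied predictor and keep the most negative ddG values."""
    scored = [(label, predict_ddg(seq)) for label, seq in mutants]
    favorable = [item for item in scored if item[1] < 0]  # negative ddG = predicted improvement
    return sorted(favorable, key=lambda item: item[1])[:top_n]
```

A call such as `rank_by_ddg(single_point_mutants(cdr_seq, sites), model_fn, top_n=50)` would then yield the shortlist passed on to experimental validation.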

Protocol 2: Generative Design for Stabilized Nanobody Frameworks

Objective: To design a nanobody (VHH) sequence with enhanced thermal stability while maintaining a canonical fold.

Materials:

- Curated dataset of nanobody sequences with experimental \(T_m\) values.

- Access to a pre-trained protein language model (e.g., ESM-2, ProtGPT2).

- Fine-tuning environment (e.g., Hugging Face Transformers, JAX).

Procedure:

- Dataset Curation: Compile sequences and corresponding \(T_m\) data. Split into training (80%), validation (10%), test (10%) sets.

- Model Fine-Tuning: Fine-tune the ESM-2 model via transfer learning. The final hidden representation is fed into a regression head to predict \(T_m\).

- Latent Space Sampling: Use a conditioned VAE. The encoder maps input sequences to a latent vector z, and the decoder generates sequences from z. The conditioning variable is the desired \(T_m\) (e.g., >75°C).

- Sequence Generation: Sample latent vectors from a Gaussian distribution and decode them using the conditioned decoder to generate novel nanobody sequences.

- Filtration & Scoring: Filter sequences for:

- Correct framework residue conservation (Cys22, Cys92, Trp103, etc.).

- Low predicted immunogenicity (using NetMHCIIpan).

- High predicted stability from the fine-tuned ESM-2 model.

- Structure Validation: Fold top-ranked sequences (e.g., 20 designs) using AlphaFold2. Discard any with low pLDDT (<85) or non-canonical folds.

- Experimental Expression & Characterization: Proceed to Protocol 3.
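The 80/10/10 split from the dataset curation step can be sketched with the standard library alone. This is a minimal random split under the assumption that records are independent; for closely related nanobody sequences, splitting by sequence cluster may be preferable to limit leakage between sets. The function name and the `(sequence, tm)` record layout are hypothetical.

```python
import random


def split_dataset(records, seed=0, fractions=(0.8, 0.1, 0.1)):
    """Shuffle records reproducibly and split into train/validation/test lists."""
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)
    n_total = len(shuffled)
    n_train = int(n_total * fractions[0])
    n_val = int(n_total * fractions[1])
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]  # remainder, so no record is dropped
    return train, val, test
```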

Protocol 3: Experimental Validation of AI-Designed Variants

Objective: To express, purify, and characterize the affinity and stability of AI-designed antibody/nanobody variants.

Materials: See "Research Reagent Solutions" table below.

Procedure: A. Expression & Purification:

- Clone synthesized gene sequences into a mammalian expression vector (e.g., pcDNA3.4) for antibodies or a bacterial vector (e.g., pET series) for nanobodies.

- For antibodies: Transiently transfect Expi293F cells using ExpiFectamine, culture for 5-7 days. For nanobodies: Transform SHuffle T7 E. coli, induce with IPTG for cytoplasmic expression.

- Purify via affinity chromatography (Protein A for IgG, His-tag for nanobodies) followed by size-exclusion chromatography (SEC) on an ÄKTA system.

B. Affinity Measurement (Bio-Layer Interferometry - BLI):

- Dilute antigen to 10 µg/mL in kinetics buffer and load onto anti-His (for His-tagged antigen) or streptavidin (for biotinylated antigen) biosensors for 300s.

- Baseline in kinetics buffer for 60s.

- Associate with serially diluted antibody (e.g., 100 nM to 1.56 nM) for 300s.

- Dissociate in kinetics buffer for 400s.

- Fit association and dissociation curves using a 1:1 binding model to calculate the association rate (\(k_{on}\)), dissociation rate (\(k_{off}\)), and equilibrium dissociation constant (\(K_D\)).
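Two small calculations underlie the BLI steps above: the two-fold dilution series (100 nM down to 1.56 nM is seven points) and the relation \(K_D = k_{off} / k_{on}\) for a 1:1 model. A minimal sketch, with hypothetical function names:

```python
def dilution_series(top_nm, n_points, factor=2.0):
    """Concentrations (nM) of a serial dilution starting at `top_nm`."""
    return [top_nm / factor ** i for i in range(n_points)]


def kd_from_rates(k_on, k_off):
    """Equilibrium dissociation constant (M) from k_on (1/(M*s)) and k_off (1/s)."""
    if k_on <= 0:
        raise ValueError("k_on must be positive")
    return k_off / k_on


antibody_series_nm = dilution_series(100.0, 7)  # 100, 50, 25, ... nM
```

The rate constants themselves come from the instrument's curve fitting; this sketch only shows how they combine.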

C. Thermal Stability Analysis (Differential Scanning Fluorimetry - DSF):

- Mix purified protein with SYPRO Orange dye in a 96-well PCR plate.

- Perform a temperature ramp from 25°C to 95°C at 1°C/min in a real-time PCR instrument.

- Monitor fluorescence intensity. The inflection point of the fluorescence curve is the apparent melting temperature (\(T_m\)).

- Compare \(T_m\) of designed variants to the parental molecule.
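Finding the inflection point of a melt curve amounts to locating the maximum of the first derivative dF/dT. The sketch below uses central differences on raw data and is an illustration only; instrument software typically smooths the curve or fits a Boltzmann sigmoid first. The function name is hypothetical.

```python
def apparent_tm(temps_c, fluorescence):
    """Return the temperature at which dF/dT (central difference) is largest."""
    if len(temps_c) != len(fluorescence) or len(temps_c) < 3:
        raise ValueError("need at least three matched temperature/fluorescence points")
    best_temp, best_slope = None, float("-inf")
    for i in range(1, len(temps_c) - 1):
        slope = (fluorescence[i + 1] - fluorescence[i - 1]) / (temps_c[i + 1] - temps_c[i - 1])
        if slope > best_slope:
            best_temp, best_slope = temps_c[i], slope
    return best_temp
```

The shift relative to the parental molecule is then simply `apparent_tm(variant) - apparent_tm(parent)` measured under identical conditions.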

Visualizations

Title: AI-Driven Affinity Maturation Workflow

Title: Generative AI Pipeline for Nanobody Stability

Research Reagent Solutions

Table 3: Essential Materials for Experimental Validation

| Item | Function / Role in Protocol | Example Product/Catalog |

|---|---|---|

| Expi293F Cells | Mammalian host for transient expression of full-length IgG antibodies. | Thermo Fisher Scientific, Cat# A14527 |

| SHuffle T7 E. coli | Bacterial host for cytoplasmic expression of disulfide-bonded nanobodies. | New England Biolabs, Cat# C3029J |

| ExpiFectamine 293 | High-efficiency transfection reagent for Expi293F system. | Thermo Fisher Scientific, Cat# A14524 |

| Ni-NTA Superflow | Affinity resin for purification of His-tagged antigens and nanobodies. | Qiagen, Cat# 30410 |

| Protein A Agarose | Affinity resin for capture of IgG antibodies from mammalian supernatant. | Thermo Fisher Scientific, Cat# 20334 |

| Superdex 75 Increase | Size-exclusion chromatography column for polishing and aggregation removal. | Cytiva, Cat# 29148721 |

| Anti-His (HIS1K) Biosensors | BLI biosensors for kinetics analysis of His-tagged antigens. | Sartorius, Cat# 18-5120 |

| SYPRO Orange Dye | Fluorescent dye for DSF, binds hydrophobic patches exposed upon unfolding. | Thermo Fisher Scientific, Cat# S6650 |

| pcDNA3.4 Vector | High-expression mammalian vector for antibody heavy and light chains. | Thermo Fisher Scientific, Cat# A14697 |

| pET-28a(+) Vector | Common bacterial expression vector for nanobody cloning with His-tag. | MilliporeSigma, Cat# 69864-3 |

Designing Novel Peptide Therapeutics and Vaccine Antigens

The advent of AI and machine learning (ML) has revolutionized de novo protein and peptide design, transitioning from structure-guided empirical methods to predictive, sequence-first approaches. This paradigm shift, exemplified by models like AlphaFold2, RFdiffusion, and ProteinMPNN, enables the rapid generation of novel peptide binders, stabilizers, and immunogens with high precision. This application note provides integrated wet-lab protocols and computational workflows for designing and validating peptide-based therapeutics and vaccine antigens, framed within an AI-augmented research pipeline.

Key AI/ML Platforms and Quantitative Performance

The following table summarizes current state-of-the-art tools and their benchmark performance in relevant design tasks.

Table 1: Performance Metrics of Key AI/ML Platforms for Peptide and Antigen Design

| AI/ML Tool | Primary Function | Key Metric | Reported Performance | Reference (Year) |

|---|---|---|---|---|

| AlphaFold2 | Structure Prediction | RMSD (Å) | ≤2.0 for many monomeric proteins | Jumper et al. (2021) |

| RFdiffusion | De Novo Protein/Peptide Design | Design Success Rate | ~10-20% high-affinity binders de novo | Watson et al. (2023) |

| ProteinMPNN | Sequence Design for Backbones | Sequence Recovery Rate | ~52% native sequence recovery | Dauparas et al. (2022) |

| ESM-2/ESMFold | Evolutionary-scale Modeling | Pseudo-perplexity | Enables functional site prediction | Lin et al. (2023) |

| ImmuneBuilder | Antibody & TCR Structure Prediction | RMSD (Å) | ~1.5 for CDR loops | Abanades et al. (2023) |

Integrated Protocol: AI-Guided Design of a Peptide Inhibitor

Protocol 3.1: In Silico Binder Design and Selection

- Objective: Generate a peptide inhibitor targeting the PD-1/PD-L1 interaction interface.

- Workflow:

- Target Analysis: Use AlphaFold2 or ESMFold to model the target protein complex (PD-1/PD-L1). Identify key interaction residues.

- Scaffold Generation: Input the target binding site (PD-L1) into RFdiffusion. Use the "conditioned hallucination" protocol to generate de novo peptide scaffolds (12-20 aa) that geometrically complement the site.

- Sequence Design: Feed the generated backbone structures into ProteinMPNN. Run multiple times (n=128) to produce diverse, low-energy, and foldable sequences for each scaffold.

- Filtration & Ranking: Filter sequences using:

- AgroPiCt: Predicts peptide aggregation propensity (threshold: <5%).

- NetMHCpan 4.1: For therapeutics, filter out sequences with high MHC-I binding affinity to reduce immunogenicity risk.

- AlphaFold2 (ColabFold): Perform quick relaxed complex prediction for top 50 sequences. Rank by predicted interface pLDDT (>85) and PAE (<5 Å at interface).

- Output: A final list of 5-10 candidate peptide sequences for synthesis.

Diagram: AI-Peptide Design Workflow

Experimental Validation Protocols

Protocol 4.1: Peptide Synthesis and Characterization

- Materials: See "The Scientist's Toolkit" below.

- Method:

- Synthesize peptides (≥95% purity) via standard Fmoc SPPS.

- Lyophilize and reconstitute in DMSO or suitable buffer.

- Confirm identity via LC-MS. Determine solubility via concentration series with OD600 measurement.

- Assess secondary structure via Circular Dichroism (CD) spectroscopy in PBS (pH 7.4).

Protocol 4.2: Binding Affinity Measurement (Surface Plasmon Resonance - SPR)

- Objective: Quantify binding kinetics of designed peptide to recombinant PD-L1.

- Chip: Series S CM5.

- Ligand: His-tagged PD-L1, immobilized via anti-His antibody capture (~100 RU).

- Analytes: Serially diluted peptides (0.78 nM - 200 nM) in HBS-EP+ buffer.

- Cycle: Contact time 120 s, dissociation time 300 s, regeneration with 10 mM Glycine pH 2.0.

- Analysis: Fit sensorgrams to a 1:1 Langmuir binding model using Biacore Evaluation Software to derive KD, ka, and kd.
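For orientation, the 1:1 Langmuir model that the fit assumes has a closed-form response: during association \(R(t) = R_{eq}(1 - e^{-(k_a C + k_d)t})\) with \(R_{eq} = R_{max} C / (C + K_D)\), and during dissociation \(R(t) = R_0 e^{-k_d t}\). The sketch below evaluates these expressions; it does not reproduce the vendor software's fitting routine, and the function names are hypothetical.

```python
import math


def association_response(t_s, conc_m, ka, kd, rmax):
    """Response (RU) at time t during association for a 1:1 Langmuir interaction."""
    k_obs = ka * conc_m + kd                # observed rate constant (1/s)
    r_eq = rmax * ka * conc_m / k_obs       # equals rmax * C / (C + KD)
    return r_eq * (1.0 - math.exp(-k_obs * t_s))


def dissociation_response(t_s, r0, kd):
    """Response (RU) at time t after analyte removal, starting from r0."""
    return r0 * math.exp(-kd * t_s)
```

At an analyte concentration equal to KD, the association curve plateaus at half of Rmax, which is a convenient sanity check on fitted parameters.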

Protocol 4.3: In Vitro Functional Assay (T-cell Activation)

- Objective: Test peptide's ability to block PD-1/PD-L1 and restore T-cell function.

- Co-culture: Use a PD-1/PD-L1 blockade bioassay (e.g., Jurkat T cells expressing PD-1 and a luciferase reporter under NFAT response element, co-cultured with CHO-K1 cells expressing PD-L1 and an antigen).

- Procedure: Add titrated peptide (0.1-100 µM) to co-culture. After 6h, measure luminescence. Calculate % restoration of signal relative to control with anti-PD-L1 mAb.
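The percent-restoration readout can be sketched as a simple normalization. The formula below assumes that an untreated co-culture well supplies the baseline and that the anti-PD-L1 mAb well defines 100% restoration; the protocol names only the mAb control, so the baseline subtraction is an assumption, and the function name is hypothetical.

```python
def percent_restoration(sample_rlu, untreated_rlu, mab_control_rlu):
    """Luminescence restored by the peptide, as a percentage of the anti-PD-L1 mAb effect."""
    window = mab_control_rlu - untreated_rlu
    if window <= 0:
        raise ValueError("mAb control must exceed the untreated baseline")
    return 100.0 * (sample_rlu - untreated_rlu) / window
```

Plotting this value against peptide concentration across the 0.1-100 µM titration gives the dose-response curve from which a potency estimate can be derived.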

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Peptide Therapeutic Development

| Item | Function | Example Product/Catalog |

|---|---|---|

| Fmoc-Protected Amino Acids | Building blocks for solid-phase peptide synthesis. | Merck Millipore, PepTech |

| Rink Amide MBHA Resin | Solid support for C-terminal amide peptide synthesis. | Aapptec AM-8000 |

| Recombinant Target Protein | For binding and functional assays. | Sino Biological (e.g., 10084-H08H for PD-L1) |

| Anti-His Capture Kit | For oriented immobilization in SPR. | Cytiva 28995056 |

| HBS-EP+ Buffer (10X) | Running buffer for SPR to minimize non-specific binding. | Cytiva BR100669 |

| PD-1/PD-L1 Blockade Bioassay | Ready-to-use cellular system for functional screening. | Promega J1250/J1581 |

| CD Spectrophotometer Cuvette | For secondary structure analysis. | Hellma 110-QS |

| LC-MS System | For purity and identity verification. | Agilent 1260 Infinity II/6125B |

AI-Enhanced Protocol: Epitope-Focused Vaccine Antigen Design

Protocol 6.1: Design of a Stabilized RSV F Protein Mimetic

- Objective: Design a single peptide that mimics the antigenic Site Ø of RSV F protein.

- Epitope Extraction: From a neutralizing antibody (e.g., D25)-RSV F co-crystal structure (PDB: 4MMS), extract the conformational epitope residues.

- Scaffold Grafting: Use the RFdiffusion "inpainting" protocol. Define the epitope residues as "motif" and the rest of a small protein scaffold (e.g., 60aa) as "designed." The model fills in sequences that stabilize the grafted epitope.

- Stability Optimization: Take the top designs and run in silico "fixed-backbone" sequence optimization with ProteinMPNN, with a foldability score from ESM-2.

- Immunogenicity Prediction: Use tools like NetMHCIIpan 4.0 to predict CD4+ T helper epitopes and BepiPred-3.0 to predict B-cell epitopes within the designed construct, ensuring broad population coverage.

Diagram: Epitope-Focused Vaccine Design Logic

Concluding Remarks

These protocols illustrate a synergistic loop between AI-driven generative design and rigorous experimental validation. The integration of structure prediction (AlphaFold2), constrained generation (RFdiffusion), and sequence optimization (ProteinMPNN) drastically accelerates the design cycle for both peptide therapeutics and precision vaccine antigens, marking a new era in computational biotherapeutics.

This application note details a practical pipeline for de novo protein design, integrating the AI tools RFdiffusion and ProteinMPNN. Within the broader thesis of AI-driven therapeutic research, this workflow exemplifies the transition from computational sequence/structure generation to physical protein production and validation. The synergy of these two models—RFdiffusion for generating novel protein backbones and ProteinMPNN for designing optimal, foldable sequences—enables the rapid creation of binders, enzymes, and scaffolds with therapeutic potential.

Table 1: Key AI Tool Specifications and Performance Metrics

| Tool | Primary Function | Key Algorithm | Typical Runtime* | Success Rate (Experimental Validation) | Key Citation (Year) |

|---|---|---|---|---|---|

| RFdiffusion | Generates novel protein structures conditioned on user-defined constraints (symmetry, shape, motif scaffolding). | Diffusion model trained on the Protein Data Bank (PDB). | 1-10 hours (GPU-dependent) | ~20% (for high-affinity binders from de novo designs) | Watson et al., Nature, 2023 |

| ProteinMPNN | Designs optimal amino acid sequences for a given protein backbone structure. | Message Passing Neural Network (MPNN). | Seconds to minutes per design. | >50% (for sequences expressing and folding into target structure) | Dauparas et al., Science, 2022 |

| AlphaFold2 or RoseTTAFold | Structure prediction for validation of designed sequences. | Deep learning (Evoformer, 3D track). | Minutes to hours. | High accuracy (pLDDT > 70 often correlates with successful folding) | Jumper et al., Nature, 2021 |

*Runtimes are for standard protein lengths (<300aa) on a modern NVIDIA GPU (e.g., A100).

Detailed Experimental Protocols

Protocol 3.1: Computational Design of a Target-Binding Protein

Objective: Generate a de novo protein that binds to a target epitope (e.g., a viral spike protein).

Materials (Computational):

- Hardware: Workstation with NVIDIA GPU (≥ 16GB VRAM).

- Software: RFdiffusion (v1.1), ProteinMPNN (v1.0), PyMOL or ChimeraX, Conda environment manager.

- Input: PDB file of the target protein. Definition of the target site (residue numbers or coordinates).

Method:

- Target Site Preparation: Isolate the target epitope chain or define residues in a contig_map.pt file for RFdiffusion.

- RFdiffusion Run: Execute a "motif scaffolding" run. Key command-line arguments: (This example designs a 150aa protein chain that interfaces with residues 10,12,20 on chain B of the target).

- Backbone Selection: Cluster the 100 output backbone structures (.pdb files) based on RMSD. Select 5-10 diverse, well-folded backbones (no knots, reasonable angles).

- Sequence Design with ProteinMPNN: For each selected backbone, generate 100 sequences.

- In silico Validation: Fold all designed sequences using AlphaFold2 (local or via ColabFold). Select sequences where the predicted structure (pLDDT > 80) closely matches (TM-score > 0.7) the original RFdiffusion backbone.

- Final Selection: Choose 5-10 sequences for synthesis based on folding confidence, diversity, and favorable binding interface properties (e.g., RosettaDock energy scores).
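As an illustration of the RFdiffusion run described in this method, the helper below assembles an argument list for a 150-residue binder against hotspots 10, 12, and 20 on chain B. It is a sketch under stated assumptions: the option names (`inference.input_pdb`, `inference.output_prefix`, `inference.num_designs`, `contigmap.contigs`, `ppi.hotspot_res`) and the `scripts/run_inference.py` entry point follow the public RFdiffusion README and should be checked against the installed version; the target residue range `B1-100`, the file names, and the function name are hypothetical.

```python
import shlex


def rfdiffusion_binder_args(target_pdb, target_range, binder_length, hotspots,
                            out_prefix, num_designs=100):
    """Build an argument list for an RFdiffusion binder-design run (not executed here)."""
    # "/0" marks a chain break between the fixed target segment and the new binder chain.
    contig = f"[{target_range}/0 {binder_length}-{binder_length}]"
    hotspot = "[" + ",".join(hotspots) + "]"
    return [
        "scripts/run_inference.py",
        f"inference.input_pdb={target_pdb}",
        f"inference.output_prefix={out_prefix}",
        f"inference.num_designs={num_designs}",
        f"contigmap.contigs={contig}",
        f"ppi.hotspot_res={hotspot}",
    ]


args = rfdiffusion_binder_args(
    target_pdb="target.pdb",      # hypothetical file name
    target_range="B1-100",        # hypothetical residue range on chain B
    binder_length=150,
    hotspots=["B10", "B12", "B20"],
    out_prefix="outputs/binder",  # hypothetical output prefix
)
command_line = shlex.join(args)   # shell-quoted string for logging or manual use
```

Building the command as a list keeps the bracketed contig and hotspot strings intact when passed to `subprocess.run` without a shell.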

Protocol 3.2: Wet-Lab Production and Validation

Objective: Express, purify, and biophysically characterize the AI-designed proteins.

Materials (Wet-Lab):

- Gene Synthesis: Cloned genes in an expression vector (e.g., pET series for E. coli).

- Expression: BL21(DE3) competent cells, LB broth, IPTG.

- Purification: Ni-NTA agarose (for His-tagged proteins), ÄKTA FPLC system, size-exclusion chromatography (SEC) column.

- Validation: SDS-PAGE gel, Circular Dichroism (CD) spectrometer, Surface Plasmon Resonance (SPR) instrument (e.g., Biacore) or Bio-Layer Interferometry (BLI; e.g., Octet).

Method:

- Gene Synthesis and Cloning: Order genes for selected sequences in a T7 expression plasmid. Transform into expression host.

- Small-Scale Expression Test: Induce 50 mL cultures with IPTG. Analyze solubility via SDS-PAGE.

- Large-Scale Expression and Purification: Express soluble designs in 1L culture. Lyse cells, clarify lysate, and purify via affinity chromatography. Further polish by SEC.

- Biophysical Characterization:

- Purity/Monodispersity: Analyze SEC elution profile (single peak) and SDS-PAGE (single band).

- Folding: Collect a CD spectrum. For a predominantly helical design, look for minima at ~208 nm and ~222 nm (alpha-helical signature) consistent with the predicted structure.

- Binding (SPR/BLI): Immobilize the target protein. Flow the purified design over the surface. Measure association and dissociation rates (kon, koff) to derive the binding affinity (KD). A successful de novo binder typically achieves a KD in the nM to µM range.
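
For a simple 1:1 interaction, the affinity follows from the fitted rate constants as KD = koff / kon. The sketch below shows that conversion; the function names and the rate constants are illustrative assumptions, not measured values or instrument software calls.

```python
def dissociation_constant(kon_per_molar_s, koff_per_s):
    """KD in molar for a 1:1 interaction: KD = koff / kon."""
    if kon_per_molar_s <= 0 or koff_per_s < 0:
        raise ValueError("kon must be positive and koff non-negative")
    return koff_per_s / kon_per_molar_s

def fraction_bound(analyte_molar, kd_molar):
    """Equilibrium occupancy of the immobilized target under a 1:1 model."""
    return analyte_molar / (analyte_molar + kd_molar)

# Illustrative rate constants (assumed, not from the article).
kon = 1.0e5    # association rate constant, 1/(M*s)
koff = 1.0e-3  # dissociation rate constant, 1/s

kd = dissociation_constant(kon, koff)
print(f"KD = {kd * 1e9:.1f} nM")
print(f"Fraction bound at 100 nM analyte: {fraction_bound(100e-9, kd):.2f}")
```

With these example values the KD works out to 10 nM, inside the nM to µM window quoted above. Interactions that deviate from 1:1 behavior (avidity, heterogeneous surfaces) need a different model.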

Visualization of Workflows and Pathways

Diagram 1: AI-Driven Protein Design and Validation Pipeline

Diagram 2: Key Binding Validation via Surface Plasmon Resonance

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Research Reagent Solutions for AI-to-Protein Workflow

| Item | Category | Function/Brief Explanation |

|---|---|---|

| NVIDIA GPU (A100/H100) | Hardware | Accelerates training and inference of large AI models (RFdiffusion, AlphaFold2). Essential for feasible runtime. |

| PyRosetta License | Software | Provides energy functions and docking algorithms for detailed in silico analysis and refinement of designs. |

| Codon-Optimized Gene Fragments | Molecular Biology | Synthetic DNA for expression of designed sequences. Codon optimization enhances expression yield in the chosen host (e.g., E. coli). |

| HisTrap HP Column | Protein Purification | Immobilized metal affinity chromatography (IMAC) column for rapid, tag-based purification of His-tagged designed proteins. |

| Superdex 75 Increase | Protein Purification | High-resolution size-exclusion chromatography (SEC) column for polishing and assessing monomeric state/oligomerization. |

| Circular Dichroism (CD) Spectrometer | Biophysics | Rapidly assesses secondary structure content and thermal stability, confirming proper folding of the designed protein. |

| Biacore T200 or Octet RED96e | Biophysics | Gold-standard (SPR) or high-throughput (BLI) instruments for label-free, quantitative measurement of binding kinetics (KD, kon, koff) to the target. |

| Cryo-Electron Microscope | Structural Biology | For high-resolution validation of the designed protein's structure, especially for complexes with their target. |

The integration of artificial intelligence (AI) with experimental characterization forms a critical, iterative pipeline for accelerating therapeutic protein design. This pipeline closes the loop between in silico prediction and in vitro/in vivo validation, enabling rapid hypothesis generation and testing. The core thesis is that ML-guided design cycles significantly reduce the experimental search space and increase the probability of discovering viable therapeutic candidates with desired properties (e.g., high affinity, stability, expressibility).

Application Note AN-001: Implementing this pipeline reduces the time from initial design to validated lead candidate by an estimated 60-70%, compared to traditional high-throughput screening alone. The key is the continuous flow of experimental data back into the AI models for retraining, creating a self-improving system.

Table 1: Quantitative Impact of Integrated AI-Experimental Pipelines

| Metric | Traditional Screening | AI-Integrated Pipeline | Improvement |

|---|---|---|---|

| Design-to-Test Cycle Time | 4-6 weeks | 1-2 weeks | ~75% faster |

| Candidate Hit Rate | 0.1 - 1% | 5 - 15% | >10x increase |

| Experimental Throughput Required | 10^4 - 10^6 variants | 10^2 - 10^3 variants | ~100-fold reduction |

| Typical Optimization Rounds | 5-8 | 2-3 | ~60% reduction |
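
One way to read the hit-rate row is as the number of variants that must be screened, on average, to find one hit, which is simply the reciprocal of the hit rate. The short calculation below uses only the ranges from the table; the function name is a hypothetical helper.

```python
def variants_per_hit(hit_rate):
    """Average number of variants screened per hit at a given hit rate."""
    if not 0 < hit_rate <= 1:
        raise ValueError("hit_rate must be a fraction in (0, 1]")
    return 1.0 / hit_rate

# Hit-rate ranges taken from the table above, as fractions.
hit_rate_ranges = {
    "Traditional Screening": (0.001, 0.01),
    "AI-Integrated Pipeline": (0.05, 0.15),
}

for approach, (low, high) in hit_rate_ranges.items():
    best = variants_per_hit(high)   # fewest variants per hit
    worst = variants_per_hit(low)   # most variants per hit
    print(f"{approach}: about {best:.0f} to {worst:.0f} variants per hit")
```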

Core Experimental Protocols

Protocol 2.1: High-Throughput Characterization of AI-Designed Protein Variants

Objective: To express, purify, and quantitatively assay a library of 100-500 AI-designed protein variants for binding affinity (KD) and thermal stability (Tm).

Materials: See "The Scientist's Toolkit" below.

Procedure:

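- Gene Synthesis & Cloning: Receive codon-optimized gene fragments for 500 variants. Use a robotic liquid handler to perform a Golden Gate assembly into a standardized expression vector (e.g., pET-29b+) in a 96-well plate format. Transform into a high-efficiency E. coli cloning strain, then move verified constructs into a T7 expression strain (e.g., BL21(DE3)) before expression, since pET vectors require T7 RNA polymerase.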

- Small-Scale Expression: Pick single colonies into 1.2 mL deep-well plates containing auto-induction media. Grow at 37°C, 900 rpm for 24 hours.

- Lysis and Purification: Pellet cells. Resuspend in lysis buffer (Lysozyme, Benzonase, protease inhibitor). Lyse via sonication or chemical lysis. Filter lysate through a 0.45 μm filter plate. Perform immobilized metal affinity chromatography (IMAC) using a nickel-chelate resin in a 96-well filter plate format. Elute with imidazole.

- Binding Affinity (KD) via Biolayer Interferometry (BLI):

- Hydrate Anti-His biosensors in buffer.

- Load: Dip sensors into purified protein samples (5 μg/mL) for 300s to achieve consistent loading.

- Baseline: Dip into buffer for 60s.

- Association: Dip into a solution of target antigen at a single, saturating concentration (e.g., 200 nM) for 300s.

- Dissociation: Dip into buffer for 300s.

- Analyze data using a 1:1 binding model. Rank variants by binding response (BLI reports this as a wavelength shift in nm; it is recorded here as response units, RU) and by the KD estimated from the single-concentration fit.

- Thermal Stability (Tm) via Differential Scanning Fluorimetry (DSF):

- Mix 10 μL of each purified protein (0.2 mg/mL) with 10 μL of 10X SYPRO Orange dye in a 96-well PCR plate.

- Perform a temperature ramp from 25°C to 95°C at 1°C/min in a real-time PCR machine.

- Analyze fluorescence curve to determine the melting temperature (Tm) for each variant.

Protocol 2.2: Data Curation for AI Model Retraining

Objective: To structure characterization data for effective retraining of protein sequence-function prediction models.

Procedure:

- Data Aggregation: Compile a CSV file with columns: Variant_ID, Amino_Acid_Sequence, Expression_Yield_mg_L, BLI_Response_RU, Estimated_KD_nM, Tm_C.

- Label Assignment: Assign qualitative labels based on thresholds (e.g., "High Binder": KD < 10 nM, "Stable": Tm > 65°C).

- Feature Engineering: Generate numerical feature representations for each sequence (e.g., one-hot encoding, physicochemical property vectors, or pre-trained deep learning embeddings from ESM-2).

- Dataset Splitting: Split data 80/10/10 into training, validation, and test sets, ensuring no data leakage between related variants.

- Model Retraining: Fine-tune a pre-trained protein language model (e.g., ProtGPT2, ESM-2) on the new aggregated dataset using a regression (for KD, Tm) or classification (for labels) head. Perform hyperparameter optimization on the validation set.

- Next-Generation Design: Use the retrained model to generate or rank a new set of 500 variants predicted to have improved properties, initiating the next cycle.
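
The curation steps above (label assignment, one-hot featurization, and a leakage-aware split) can be sketched with the Python standard library. Several pieces are assumptions for illustration: the `Parent_ID` column is hypothetical and is not in the protocol's column list, it stands in for whatever definition of "related variants" a project uses, and the example rows are invented. The split assigns whole parent groups to one partition so that close relatives never straddle training and test data.

```python
import csv
import io
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def assign_labels(row, kd_cutoff_nm=10.0, tm_cutoff_c=65.0):
    """Apply the example thresholds from the protocol to one CSV row."""
    return {
        "High Binder": float(row["Estimated_KD_nM"]) < kd_cutoff_nm,
        "Stable": float(row["Tm_C"]) > tm_cutoff_c,
    }

def one_hot(sequence):
    """Flatten a sequence into a one-hot vector, 20 entries per residue."""
    index = {aa: i for i, aa in enumerate(AMINO_ACIDS)}
    vector = []
    for aa in sequence:
        column = [0] * len(AMINO_ACIDS)
        column[index[aa]] = 1
        vector.extend(column)
    return vector

def grouped_split(rows, group_key="Parent_ID", fractions=(0.8, 0.1), seed=0):
    """Split rows into train/val/test by group, so groups never mix."""
    groups = sorted({row[group_key] for row in rows})
    random.Random(seed).shuffle(groups)
    n_train = round(fractions[0] * len(groups))
    n_val = round(fractions[1] * len(groups))
    partition = {}
    for i, group in enumerate(groups):
        if i < n_train:
            partition[group] = "train"
        elif i < n_train + n_val:
            partition[group] = "val"
        else:
            partition[group] = "test"
    split = {"train": [], "val": [], "test": []}
    for row in rows:
        split[partition[row[group_key]]].append(row)
    return split

# Invented example rows; Parent_ID is a hypothetical grouping column.
CSV_TEXT = """Variant_ID,Parent_ID,Amino_Acid_Sequence,Expression_Yield_mg_L,BLI_Response_RU,Estimated_KD_nM,Tm_C
v001,p01,ACDK,12.0,0.8,4.2,71.5
v002,p01,ACDR,9.5,0.3,85.0,66.0
v003,p02,GHWY,15.1,0.9,2.1,58.3
"""
rows = list(csv.DictReader(io.StringIO(CSV_TEXT)))
labels = {row["Variant_ID"]: assign_labels(row) for row in rows}
features = {row["Variant_ID"]: one_hot(row["Amino_Acid_Sequence"]) for row in rows}

# Larger synthetic set to show the 80/10/10 split: 10 parents, 2 variants each.
demo_rows = [
    {"Variant_ID": f"v{p:02d}_{k}", "Parent_ID": f"p{p:02d}"}
    for p in range(10)
    for k in range(2)
]
split = grouped_split(demo_rows)
print({name: len(part) for name, part in split.items()})
```

In practice the grouping is often derived from sequence-identity clustering rather than a recorded parent, and learned embeddings such as those from ESM-2 would replace the one-hot vectors.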

Visualization of Workflows and Pathways

Diagram 1: Closed-Loop AI-Protein Design Pipeline

Diagram 2: Detailed Workflow: From Gene to Data

The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for AI-Driven Protein Characterization

| Item | Function in Pipeline | Example/Supplier |

|---|---|---|

| Codon-Optimized Gene Fragments | Provides the DNA starting material for the variant library, optimized for expression in the chosen host (e.g., E. coli). | Twist Bioscience, IDT |

| High-Throughput Cloning Kit | Enables parallel assembly of hundreds of expression constructs. | NEB Golden Gate Assembly Kit (96-well format) |

| Automated Liquid Handler | Essential for precision and reproducibility in plate-based setup for cloning, expression, and assay preparation. | Beckman Coulter Biomek, Opentrons OT-2 |

| Nickel-Chelate Resin Plates | For parallel IMAC purification of His-tagged proteins in a 96-well filter plate format. | Cytiva His MultiTrap FF plates |

| Biolayer Interferometry (BLI) System | Label-free kinetic binding analysis for medium-throughput affinity screening. | Sartorius Octet RED96e |

| Anti-His (HIS1K) Biosensors | BLI biosensors specific for capturing His-tagged proteins. | Sartorius HIS1K |

| Real-Time PCR Instrument with DSF capability | Measures protein thermal unfolding by monitoring fluorescence of a dye (e.g., SYPRO Orange). | Applied Biosystems QuantStudio, Bio-Rad CFX |

| Protein Language Model (Pre-trained) | The core AI engine for generating sequences or predicting properties from sequence. | ESM-2 (Meta), ProtGPT2 (Hugging Face) |

| Cloud/High-Performance Compute (HPC) Resource | Provides the computational power needed for model training, inference, and data analysis. | AWS, GCP, Azure, or local GPU cluster |

Overcoming Hurdles: Strategies for Robust and Deployable AI-Designed Proteins

Addressing Data Scarcity and Bias in Training Sets

In AI-driven protein design for therapeutics, the quality and quantity of training data are critical. Data scarcity limits model generalization, while bias can lead to designs with skewed properties, poor efficacy, or unforeseen immunogenicity. This document provides application notes and protocols to mitigate these issues.

Table 1: Common Data Sources for Protein Therapeutics AI & Inherent Limitations

| Data Source | Approx. Volume (Public) | Primary Biases | Typical Use Case |

|---|---|---|---|

| PDB (Protein Data Bank) | ~200k Structures | Over-represents stable, crystallizable proteins; under-represents membrane proteins, disordered regions. | Structure prediction, folding landscapes. |

| UniProtKB/Swiss-Prot | ~500k Manually Reviewed Sequences | Taxonomic bias (human, model organisms); functional bias towards well-characterized proteins. | Sequence-function relationships, language models. |

| Clinical Trial Databases (ClinicalTrials.gov) | ~400k Studies | Bias towards successful or ongoing trials; sparse negative results. | Efficacy & safety outcome prediction. |

| Patent Databases (e.g., USPTO) | Millions of Documents | Legal/novelty bias; often lacks detailed experimental data. | Identifying novel scaffolds & design spaces. |

Table 2: Impact of Data Augmentation Techniques on Model Performance (Example: Stability Prediction)

| Technique | Synthetic Data Generated | Test Set ΔAUROC (vs. Baseline) | Key Risk Mitigated |

|---|---|---|---|

| Random Mutagenesis (in silico) | 10x Original Set | +0.05 | Scarcity of unstable variants. |

| Structure-based Diffusion | 5x Original Set | +0.08 | Scarcity of novel folds. |

| Language Model Generation (e.g., ESM) | 20x Original Set | +0.12 | Phylogenetic & homology bias. |

| Experimental GAN on Physicochemical Space | 15x Original Set | +0.07 | Bias towards lab-measurable properties. |
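
As a concrete picture of the simplest technique in the table, in silico random mutagenesis, the sketch below generates unique point mutants of a parent sequence. The function name, the parent sequence, and the choice of ten variants per parent (mirroring the "10x" row) are illustrative assumptions. Note that the generated sequences carry no measured labels; how labels are assigned to synthetic variants is a separate modeling decision that this sketch does not address.

```python
import math
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def random_mutants(parent, n_variants, n_mutations=1, seed=0):
    """Return n_variants unique sequences, each with n_mutations substitutions."""
    # Upper bound on distinct mutants: choose positions, 19 alternatives each.
    max_unique = math.comb(len(parent), n_mutations) * 19 ** n_mutations
    if n_variants > max_unique:
        raise ValueError("requested more unique mutants than exist")
    rng = random.Random(seed)  # seeded for reproducible augmentation
    mutants = set()
    while len(mutants) < n_variants:
        seq = list(parent)
        for pos in rng.sample(range(len(seq)), n_mutations):
            alternatives = [aa for aa in AMINO_ACIDS if aa != seq[pos]]
            seq[pos] = rng.choice(alternatives)
        mutants.add("".join(seq))
    return sorted(mutants)

parent = "MKTAYIAKQR"  # invented 10-residue example sequence
mutants = random_mutants(parent, n_variants=10)
for m in mutants:
    print(m)
```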

Core Experimental Protocols

Protocol 1: Systematic Audit for Dataset Bias in Therapeutic Protein Datasets

Objective: Identify and quantify sources of bias in a collected dataset intended for training a protein property predictor.

Materials:

- Primary dataset (e.g., sequences, structures, or measured properties).

- Reference databases (e.g., UniProt for taxonomic distribution, PDB for structural classes).

- Statistical software (Python/R).

Procedure:

- Characterize Data Distribution: For each key attribute (e.g., organism source, protein family, experimental method), compute its frequency within your dataset.

- Define Reference Population: Establish the ideal, unbiased target population relevant to your therapeutic question (e.g., "all human secreted proteins").

- Quantify Divergence: Calculate divergence metrics (e.g., Kullback-Leibler divergence, Jensen-Shannon distance) between your dataset distribution and the reference population for each attribute.

- Correlate with Performance: Segment your hold-out test set by underrepresented attributes. Compare model performance (e.g., RMSE, AUC) across segments to identify blind spots.

- Document Audit: Create a bias audit report summarizing findings, which must accompany any published model.
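
The divergence step of the audit can be sketched as follows, using the Jensen-Shannon distance between the attribute distribution in a dataset and in a reference population. The organism categories and counts are invented for illustration, and the helper names are hypothetical. With base-2 logarithms the distance ranges from 0 (identical distributions) to 1 (no overlap).

```python
import math
from collections import Counter

def to_distribution(items, categories):
    """Relative frequency of each category, in a fixed category order."""
    counts = Counter(items)
    total = sum(counts[c] for c in categories)
    if total == 0:
        raise ValueError("no items fall in the given categories")
    return [counts[c] / total for c in categories]

def kl_divergence(p, q):
    """Kullback-Leibler divergence D(p || q) in bits."""
    total = 0.0
    for pi, qi in zip(p, q):
        if pi == 0:
            continue
        if qi == 0:
            return math.inf  # p has mass where q has none
        total += pi * math.log2(pi / qi)
    return total

def js_distance(p, q):
    """Jensen-Shannon distance: square root of the JS divergence."""
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]
    jsd = 0.5 * kl_divergence(p, m) + 0.5 * kl_divergence(q, m)
    return math.sqrt(max(jsd, 0.0))

# Invented example: organism source of sequences in a dataset vs. a reference.
categories = ["human", "mouse", "E. coli", "other"]
dataset = ["human"] * 70 + ["mouse"] * 20 + ["E. coli"] * 8 + ["other"] * 2
reference = ["human"] * 40 + ["mouse"] * 20 + ["E. coli"] * 20 + ["other"] * 20

p = to_distribution(dataset, categories)
q = to_distribution(reference, categories)
print(f"KL(dataset || reference) = {kl_divergence(p, q):.3f} bits")
print(f"JS distance = {js_distance(p, q):.3f}")
```

The Jensen-Shannon distance is often the more practical choice for audits because, unlike KL divergence, it is symmetric and stays finite when a category is missing from one side.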

Protocol 2: Generating Physicochemically-Informed Synthetic Sequences via Constrained Sampling

Objective: Expand training data for a specific protein fold or function while controlling for realistic physicochemical properties.

Materials:

- A multiple sequence alignment (MSA) of the target protein family.

- A pretrained protein language model (e.g., ESM-2, ProtGPT2).

- Property prediction tools (e.g., Aggrescan3D, NetSurfP-3.0).

- Defined target ranges for key properties (e.g., hydrophobicity index, aggregation propensity, disorder score).

Procedure:

- Condition the Model: Fine-tune or prompt the base language model on your target MSA to capture family-specific motifs.

- Set Sampling Constraints: Define logical constraints (e.g., must contain active site residues X, Y, Z) and soft property targets.